11.30.2008 14:32

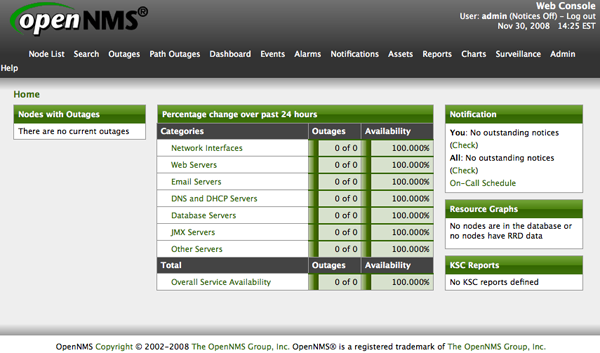

opennms

I was going to look at nagios for

fink and look into adding checks for my tide stations, but I

figured that since OpenNMS is

already in fink and one

of the fink maintainers also works on OpenNMS, it might be

worth giving it a try on my Mac laptop.


% fink describe opennms
% less /sw/share/doc/opennms/README.Darwin
% fink install opennms
% fink install tomcat5 # Did I really need this one?
% sudo emacs /sw/var/postgresql-8.3/data/pg_hba.conf # Realize that the file looks to be already configured
% sudo emacs /sw/var/postgresql-8.3/data/postgresql.conf
listen_addresses = 'localhost' # what IP address(es) to listen on;
max_connections = 192 # (change requires restart)
% sudo daemonic enable postgresql83

This is for Mac OS X 10.5:

% sudo emacs /etc/sysctl.conf
kern.sysv.shmmax=16777216
kern.sysv.shmmin=1
kern.sysv.shmmni=64
kern.sysv.shmseg=8
kern.sysv.shmall=32768

Once the above parameters are set, you need to reboot the Mac.

% #cd /sw/bin && sudo pgsql.sh-8.3 restart # Not needed with reboot
# There is something weird with my postgresql setup...
# Lots of complaints on restart
% sudo -u postgres createdb -U postgres -E UNICODE opennms
% install_iplike_83.sh
% sudo /sw/var/opennms/bin/install -dis
% sudo /sw/bin/tomcat5 start
% sudo opennms start
% open http://localhost:8980/opennms/
U: admin, P: admin

Then, when you are ready to have an OpenNMS service always running, do this:

% sudo daemonic enable opennms

Above, you can see my comment about the postgresql restart. What's up with this?

% sudo pgsql.sh-8.3 restart
pg_ctl-8.3: PID file "/sw/var/postgresql-8.3/data/postmaster.pid" does not exist
Is server running?
starting server anyway
shell-init: error retrieving current directory: getcwd: cannot access parent directories: Permission denied
server starting
LOG: 00000: could not identify current directory: Permission denied
LOCATION: find_my_exec, exec.c:196
FATAL: XX000: /sw/bin/postgres-8.3: could not locate my own executable path
LOCATION: PostmasterMain, postmaster.c:470

11.30.2008 12:48

bdec xml description of binary files

bdec is a python project that defines an XML description of a binary file format and allows programs to read the data. If only it handled non-byte-aligned data such as AIS binary.

Writing decoders for binary formats is typically tedious and error

prone. Binary formats are usually specified in text documents that

developers have to read if they are to create decoders, a time

consuming, frustrating, and costly process.

While there are high level markup languages such as ASN.1 for

specifying formats, few specifications make use of these languages,

and such markup languages cannot be retro-fitted to existing binary

formats. 'bdec' is an attempt to specify arbitrary binary formats in a

markup language, and create decoders automatically for that binary

format given the high level specification.

Bdec can:

* Allow specifications to be easily written, updated, and maintained.

* Decode binary files directly from the specification.

* Generate portable, readable, and efficient C decoders.

* Provide rudimentary encoding support.

* Run under Windows & Unix operating systems

The bdec xml specification uses constructs loosely based on those

found in ASN.1.

Example description:

<protocol>
  <sequence name="png">
    <field name="signature" length="64" type="hex" value="0x89504e470d0a1a0a" />
    <sequenceof name="chunks">
      <reference name="unknown chunk" />
    </sequenceof>
  </sequence>
  <common>
    <sequence name="unknown chunk">
      <field name="data length" length="32" type="integer" />
      <field name="type" length="32" type="hex" />
      <field name="data" length="${data length} * 8" type="hex" />
      <field name="crc" length="32" type="hex" />
    </sequence>
  </common>
</protocol>
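
To make the chunk layout in that description concrete, here is a minimal hand-written sketch in Python of the same structure: a 64-bit signature followed by repeated chunks of data length, type, data, and crc. This is not bdec output and does not use bdec. The names `iter_chunks` and `make_chunk` are made up for illustration, and the integers are read as big endian because that is how PNG stores them.

```python
import struct
import zlib

PNG_SIGNATURE = b'\x89PNG\r\n\x1a\n'  # the 64-bit value 0x89504e470d0a1a0a


def iter_chunks(data):
    """Yield (type, data, crc) for each chunk after the PNG signature."""
    if data[:8] != PNG_SIGNATURE:
        raise ValueError('missing PNG signature')
    offset = 8
    while offset < len(data):
        # "data length": 32-bit unsigned integer, counted in bytes
        (length,) = struct.unpack('>I', data[offset:offset + 4])
        chunk_type = data[offset + 4:offset + 8]
        body = data[offset + 8:offset + 8 + length]
        (crc,) = struct.unpack('>I', data[offset + 8 + length:offset + 12 + length])
        yield chunk_type, body, crc
        offset += 12 + length


def make_chunk(chunk_type, body):
    """Build one chunk; the CRC covers the type and data fields."""
    crc = zlib.crc32(chunk_type + body) & 0xffffffff
    return struct.pack('>I', len(body)) + chunk_type + body + struct.pack('>I', crc)


if __name__ == '__main__':
    sample = PNG_SIGNATURE + make_chunk(b'tEXt', b'hi') + make_chunk(b'IEND', b'')
    for chunk_type, body, crc in iter_chunks(sample):
        print(chunk_type.decode('ascii'), len(body), '%08x' % crc)
```

Writing this by hand for every format is exactly the tedium that a declarative description is meant to remove.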

11.30.2008 09:55

Univ of Alaska - Autonomous Aircraft

Autonomous aircraft make a lot of

sense for certain tasks. If a ship can carry a bunch of them, then

they can act as a force multiplier. Additionally, by not having to

support a person onboard the aircraft, it can be made small enough

to be easily transported as needed. For science applications,

typical payloads might consist of 3-axis magnetometers, bathy or

topo lidar (laser ranging), weather & chemical sensors and

cameras. Especially interesting is the idea of airborne magnetometers. A shipboard magnetometer must be towed a long way behind the ship, which introduces operational issues and layback positioning issues. Additionally, I've experienced losing one in ice.

Univ. of Alaska testing two autonomous aircraft on the NOAA ship Oscar Dyson in October:

Jeff Gee has been pushing the magnetometer idea for a long time.


11.29.2008 16:44

Call for Computer Science articles for Wikipedia

There is a call for new and improved

articles on Computer Science in Wikipedia:

Wanted: Better Wikipedia coverage of theoretical computer science [Scott Aaronson]


# Property testing
# Quantum computation (though Quantum computer is defined)
# Algorithmic game theory
# Derandomization
# Sketching algorithms
# Propositional proof complexity (though Proof complexity is defined)
# Arithmetic circuit complexity
# Discrete harmonic analysis
# Streaming algorithms
# Hardness of approximation
...

11.28.2008 16:13

vimeo

At Loic's suggestion, I checked out

Vimeo for HD video. The

source video is 1024 wide, so it's only half HD. The text is

definitely more readable. There is a full screen mode, but the

artifacting is pretty strong.

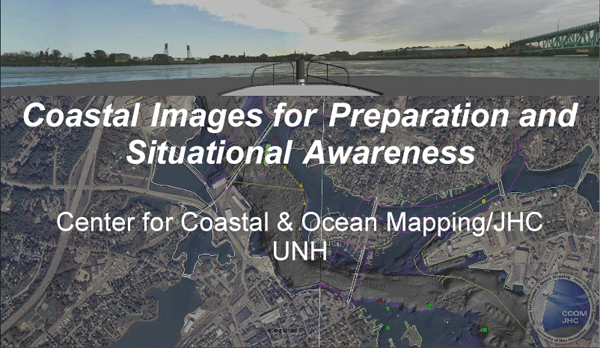

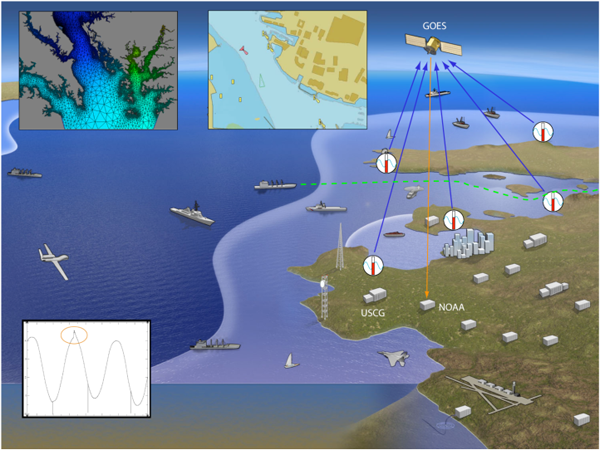

Coastal Images for Preparation and Situational Awareness from Kurt Schwehr on Vimeo.


11.28.2008 08:14

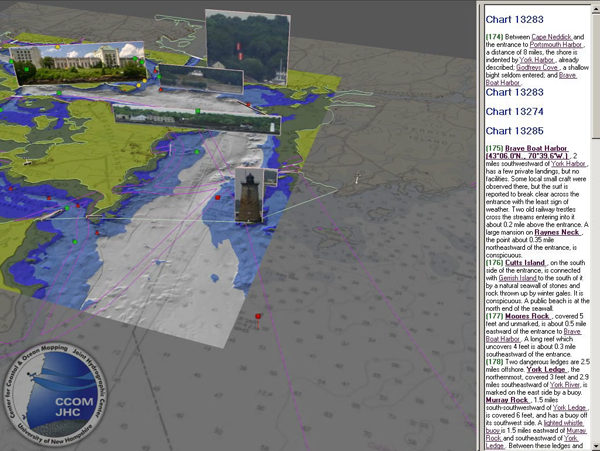

Chart of the Future Videos on YouTube

Here are a couple of videos from the CCOM Visualization Lab, produced by Matt Plumlee. YouTube doesn't make for the highest resolution videos, but at least they are published someplace.

Coastal Images for Preparation and Situational Awareness

Tide Aware Path Planning

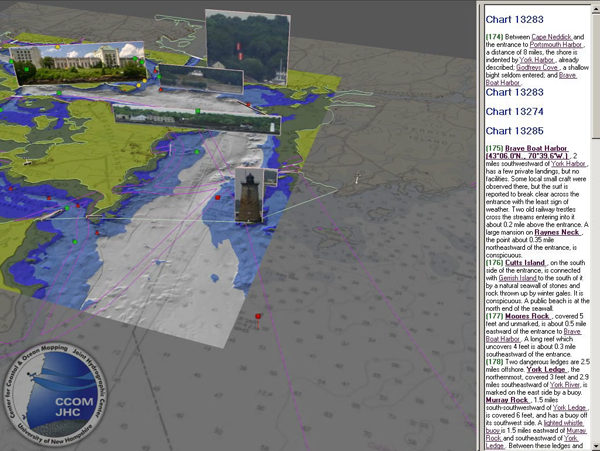

GeoCoastPilot - Early version from December 2007. Note that the new interface is quite different. Formerly known as the Digital Coast Pilot.

I also threw the movie I made of the Cosco Busan radar display on YouTube.

Cosco Busan Radar


11.27.2008 07:03

USACE contract with Woods Hole Group

Note: this is not WHOI.

Environmental Planning for USACE


Woods Hole Group has been awarded a contract by the United States Army Corps of Engineers (USACE) New England District to provide environmental planning and consulting services for various projects located throughout the 14 northeastern states from Maine to Virginia and the nation's capital. The contract can extend for up to five years, with an estimated budget of USD15 million. ...

The scope of work will cover the USACE's North Atlantic Division mission area, which includes the New England District, as well as USACE Districts in New York, Philadelphia, Baltimore and Norfolk (VA, USA). The North Atlantic Division is one of eight Army Corps of Engineers regional business centres. As a regional business centre, the division designs, builds and maintains projects to support the military, develop and protect the nation's water resources, and restore, protect and enhance the environment.

The work will be diverse and include the following:

* Environmental impact reports/statements

* Dredging/dredge material management

* Multi-disciplinary environmental measurements and monitoring

* Oceanography

* Risk assessments

* Hazardous and toxic waste site monitoring and remediation planning

* Other specialised studies

11.26.2008 12:42

mbsystem in fink for PPC

I got a little too clever for my own good...


% fink install mbsystem
#!/bin/bash -ve
# NOTE: do not set -e for bash in the line above. That will break the grep
perl -pi.bak -e 's|\@FINKPREFIX\@|/sw|g' install_makefiles
cpp -dM /dev/null | grep __LITTLE_ENDIAN__
### execution of /var/tmp/tmp.1.TrwYax failed, exit code 1

On PPC, the little endian check fails and grep has an exit status of 1. This is fine, but bash then sees it as an error and quits with a failure. Sigh.

Here are some options. First is 'uname -m'

big endian machine
% uname -m
Power Macintosh

little endian machine
$ uname -m
i386

That works, but if uname -m ever changes, we are sunk. Here is my new, more robust endian check that doesn't require actually compiling anything.

big endian ppc machine
% cpp -dM /dev/null | grep -i ENDIAN__ | cut -d_ -f 3
BIG

little endian i386 machine
% cpp -dM /dev/null | grep -i ENDIAN__ | cut -d_ -f 3
LITTLE

Of course, now I'm getting mbsystem failing to build. I thought I had mbsystem not building with OpenGL stuff...

mbview_callbacks.c:1719: warning: too many arguments for format
mbview_callbacks.c:1720: warning: format '%f' expects type 'double', but argument 4 has type 'int'
mbview_callbacks.c:1720: warning: too many arguments for format
mbview_callbacks.c:1775: error: 'GLwNrgba' undeclared (first use in this function)
mbview_callbacks.c:1775: error: (Each undeclared identifier is reported only once
mbview_callbacks.c:1775: error: for each function it appears in.)
mbview_callbacks.c:1775: warning: left-hand operand of comma expression has no effect
mbview_callbacks.c:1777: error: 'GLwNdepthSize' undeclared (first use in this function)
mbview_callbacks.c:1777: warning: left-hand operand of comma expression has no effect
mbview_callbacks.c:1779: error: 'GLwNdoublebuffer' undeclared (first use in this function)
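
Coming back to the endian check for a moment: if Python happens to be available at build time (an assumption; the fink script in this entry is plain bash), the same BIG/LITTLE answer can be had without cpp or any grep exit codes. A minimal sketch, with `host_endianness` as a made-up helper name:

```python
import struct
import sys


def host_endianness():
    """Return 'BIG' or 'LITTLE' for the machine running this script."""
    # Pack the 16-bit value 1 in native byte order. On a little endian
    # machine the low byte (0x01) is stored first.
    first_byte = struct.pack('=H', 1)[0:1]
    return 'LITTLE' if first_byte == b'\x01' else 'BIG'


if __name__ == '__main__':
    print(host_endianness())
    # sys.byteorder reports the same thing as 'big' or 'little'.
    print(sys.byteorder.upper())
```

Like the cpp version, this reports on the machine doing the build, so it would not help with cross compiling.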

11.25.2008 16:49

Simple structure and pointer ctypes example

I needed a more straightforward

example of a pointer to a structure being returned from a function

call to practice ctypes on. So, I made one and here is what I did.

There wasn't anything quite like it in the ctypes

tutorial.

First the C code. I didn't want to deal with malloc / free, so I just put an instance of the structure (sample2) in the scope of the shared object.


// Build like this on the mac: gcc -g -Wall -bundle -undefined dynamic_lookup test_ctypes.c -o _test_ctypes.so

struct two {
    int g;
    float h;
};

typedef struct two *two_ptr;

struct two sample2;

two_ptr return_a_two (void) {
    sample2.g = 123;
    sample2.h = 456.789;
    return &sample2;
}

And the python ctypes interface.

#!/usr/bin/env python
import ctypes

lib = ctypes.CDLL('_test_ctypes.so')

class struct_two(ctypes.Structure):
    _fields_ = [
        ('g',ctypes.c_int),
        ('h',ctypes.c_float)
    ]

return_a_two = lib.return_a_two
return_a_two.restype = ctypes.POINTER(struct_two)

if __name__ == '__main__':
    ptr = return_a_two()
    print ptr.contents.g
    print ptr.contents.h

When run, it prints out the value of the two members of the

structure.

% ./test_ctype.py
123
456.789001465

Now to figure out the actual structure of magic_set of file.
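
For practicing the same pattern without building a shared object, libc already has functions that return a pointer to a structure. Here is a sketch using gmtime(), which returns a `struct tm *`. The assumptions are a Unix-like system where time_t fits in a C long and where struct tm begins with the nine int members in the usual order; `StructTm` and `epoch_to_date` are names made up for this example, and the print calls use function syntax rather than the print statement used in the script above.

```python
import ctypes
import ctypes.util


class StructTm(ctypes.Structure):
    # Only the leading int members are declared. Some platforms append
    # extra members, but we never read past these nine.
    _fields_ = [(name, ctypes.c_int) for name in (
        'tm_sec', 'tm_min', 'tm_hour', 'tm_mday', 'tm_mon',
        'tm_year', 'tm_wday', 'tm_yday', 'tm_isdst')]


libc = ctypes.CDLL(ctypes.util.find_library('c'))
libc.gmtime.restype = ctypes.POINTER(StructTm)
libc.gmtime.argtypes = [ctypes.POINTER(ctypes.c_long)]


def epoch_to_date(seconds):
    """Return (year, month, day) in UTC for seconds since the epoch."""
    ptr = libc.gmtime(ctypes.byref(ctypes.c_long(seconds)))
    tm = ptr.contents  # dereference the returned pointer
    # Copy the values out right away; gmtime reuses a static buffer.
    return tm.tm_year + 1900, tm.tm_mon + 1, tm.tm_mday


if __name__ == '__main__':
    print(epoch_to_date(0))
```

As with sample2 in the C code above, the structure lives in memory owned by the library, so there is nothing to free on the Python side.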

11.25.2008 15:49

New SIO building

Scripps Gets $12m for New Building [mtr]

Scripps Institution of Oceanography at UC San Diego has been awarded $12 million by the U.S. Department of Commerce (DoC)/National Institute of Standards and Technology (NIST) to construct a new laboratory building on the Scripps campus for research on marine ecosystem forecasting. This new building will become a resource for marine ecological research at Scripps and for other national and international ocean science organizations that address the management of marine resources. The new research building at Scripps will house the Marine Ecosystem Sensing, Observation and Modeling (MESOM) Laboratory. Scripps, a leader in research on climate change and its impacts on marine ecosystems, is a research institution and graduate/undergraduate school at UC San Diego. ...

11.25.2008 15:43

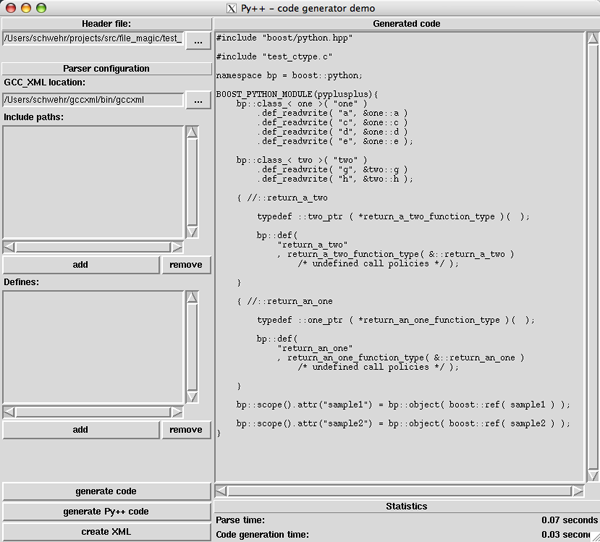

Py++ generating boost interfaces for python

I tried yet another tool. This one

uses gccxml to parse C or C++ and output a boost python interface:

Py++.

I installed gccxml and py++ and ran a test case against it. It works,

but I don't really want to use boost for what I'm doing.

Time to go back to hand coding.


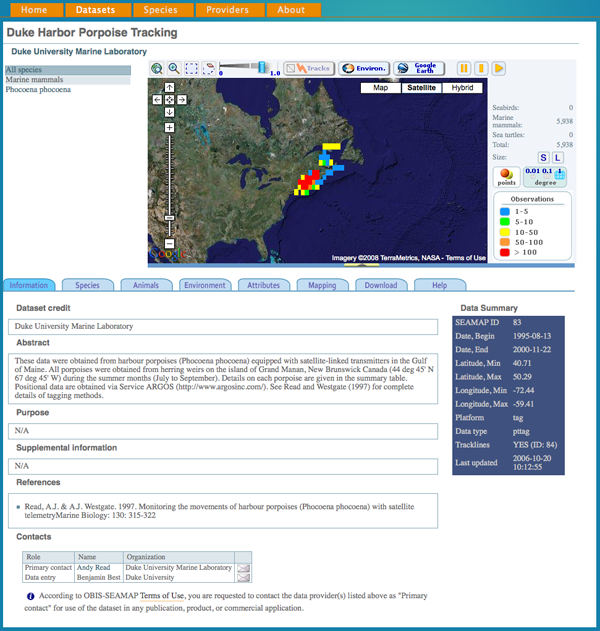

11.25.2008 13:56

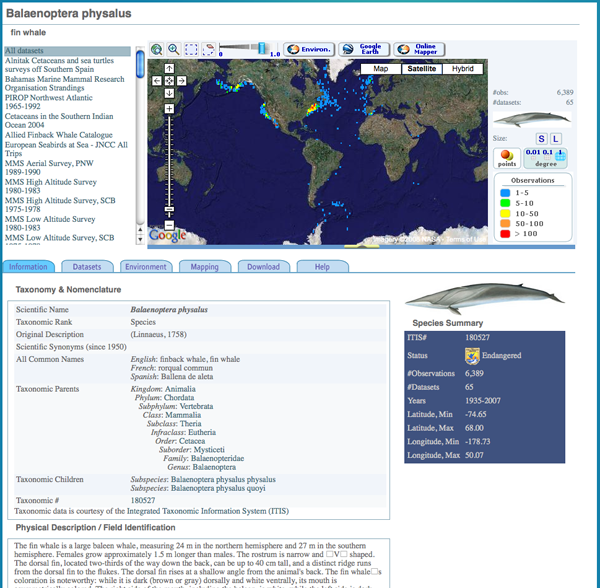

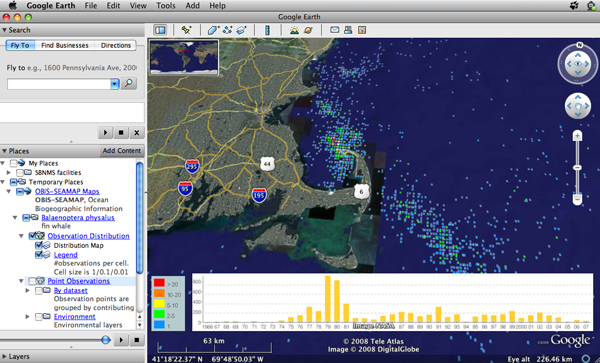

OBIS-SEAMAP datasets

The Google Earth Blog today pointed

to

Ocean Biogeographic Maps in Google Earth

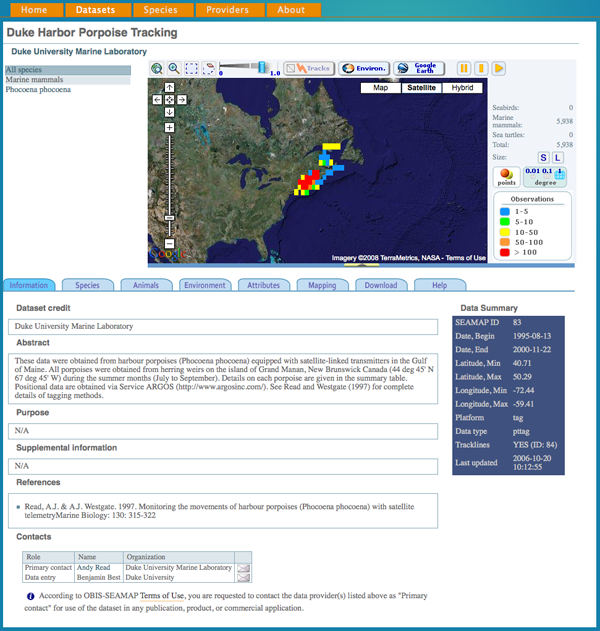

By dataset... e.g. Duke Harbor Porpoise Tracking

By species... I notice there are no right whale entries. Balaenoptera physalus - fin whale

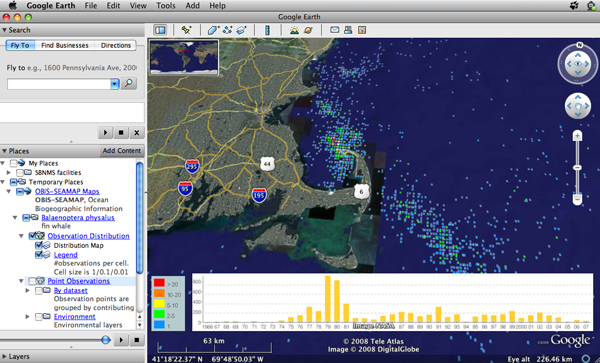

And the fin whale in Google Earth... click on mapping to get access.

They also have WMS and WFS feeds.

OBIS-SEAMAP, Ocean Biogeographic Information System Spatial Ecological Analysis of Megavertebrate Populations, is a spatially referenced online database, aggregating marine mammal, seabird and sea turtle data from across the globe. The collection can be searched and visualized through a set of advanced online mapping applications.

Check out the restrictions on the site. The first usage restriction is a bit weird. I am surprised that you have to ask permission for publications. I get the commercial restriction and the citation.

* Not to use data contained in OBIS-SEAMAP in any publication, product, or commercial application without prior written consent of the original data provider.

* To cite both the data provider and OBIS-SEAMAP appropriately after approval of use is obtained.

...


11.25.2008 10:42

Seacoast NH farmers' markets

Last weekend, Dover had an excellent

farmers' market in the McIntosh Atlantic Culinary Academy. I picked

up some really nice locally raised meats. For the info on local

farmers' markets, check out Seacoast Eat Local

Now if it would just stop storming...


11.25.2008 09:49

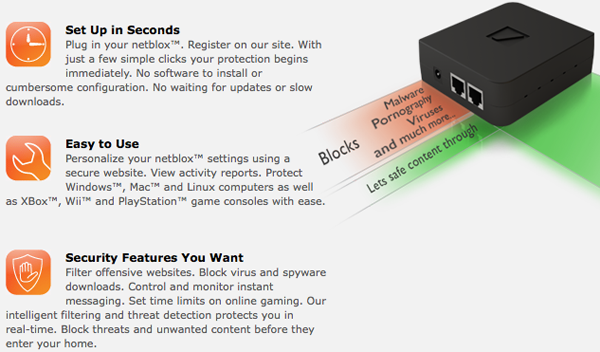

Zort Labs netblox

Several folks from CCOM (Brian L.,

Jim C., and Nathan P.) have left to start a company: ZortLabs. Their product is called

netblox, which is a small box that filters internet traffic before

it gets to your computer.

11.25.2008 08:00

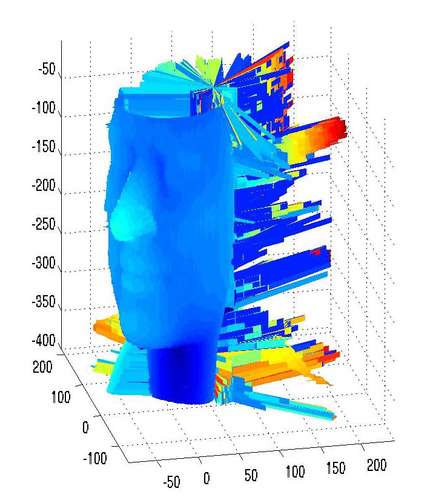

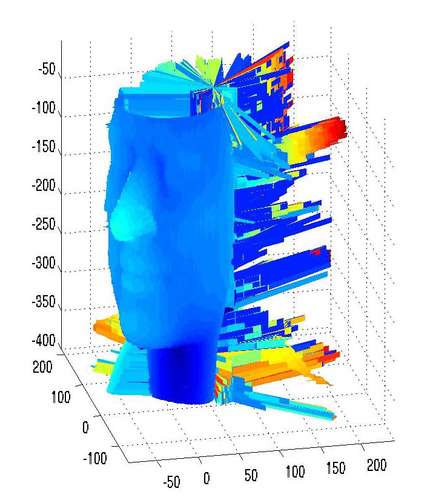

Home brew laser scanner

Why is it that I always lose my

laser pointer? I got this link from Peter Selkin. Thanks Peter!

3-D

Laser Scanner. [instructables] Requires a laser

pointer, wine glass, and an imaging device (aka the iSight camera

built into your mac laptop). The website even provides some matlab

code to get you going. Errr... never look into the laser.

Know How to Make a 3-D Scanner


11.25.2008 07:15

ctypes python interface built by pyglet

I've been looking at python ctypes

code generators a bit. The idea is to look at the C header and get

most of the work done automatically to code the functions,

structures, and #defines. Then I can focus on making a pythonic

interface. The first one to work is

wraptypes in pyglet.

However, the release does not seem to contain wraptypes, so I had to

pull it from svn.

% mkdir pyglets
% cd pyglets
% svn co http://pyglet.googlecode.com/svn/trunk/tools/wraptypes
% cd wraptypes
% chmod +x wrap.py
% ./wrap.py magic.h

I've cut down the output some, but it shows that I can use some of the code it generates.

__docformat__ = 'restructuredtext'

import ctypes

from ctypes import *

import pyglet.lib

_lib = pyglet.lib.load_library('magic')

_int_types = (c_int16, c_int32)
if hasattr(ctypes, 'c_int64'):
    _int_types += (ctypes.c_int64,)
for t in _int_types:
    if sizeof(t) == sizeof(c_size_t):
        c_ptrdiff_t = t

class c_void(Structure):
    _fields_ = [('dummy', c_int)]

MAGIC_NONE = 0 # magic.h:32

MAGIC_DEBUG = 1 # magic.h:33

MAGIC_SYMLINK = 2 # magic.h:34

MAGIC_COMPRESS = 4 # magic.h:35

MAGIC_DEVICES = 8 # magic.h:36

# ...

class struct_magic_set(Structure):
    __slots__ = [
    ]
struct_magic_set._fields_ = [
    ('_opaque_struct', c_int)
]

class struct_magic_set(Structure):
    __slots__ = [
    ]
struct_magic_set._fields_ = [
    ('_opaque_struct', c_int)
]

magic_t = POINTER(struct_magic_set) # magic.h:62

# magic.h:63

magic_open = _lib.magic_open

magic_open.restype = magic_t

magic_open.argtypes = [c_int]

# magic.h:68

magic_buffer = _lib.magic_buffer

magic_buffer.restype = c_char_p

magic_buffer.argtypes = [magic_t, POINTER(None), c_size_t]

# ...

__all__ = ['MAGIC_NONE', 'MAGIC_DEBUG', 'MAGIC_SYMLINK', 'MAGIC_COMPRESS',

'MAGIC_DEVICES', 'MAGIC_MIME_TYPE', 'MAGIC_CONTINUE', 'MAGIC_CHECK',

'MAGIC_PRESERVE_ATIME', 'MAGIC_RAW', 'MAGIC_ERROR', 'MAGIC_MIME_ENCODING',

'MAGIC_MIME', 'MAGIC_NO_CHECK_COMPRESS', 'MAGIC_NO_CHECK_TAR',

'MAGIC_NO_CHECK_SOFT', 'MAGIC_NO_CHECK_APPTYPE', 'MAGIC_NO_CHECK_ELF',

'MAGIC_NO_CHECK_ASCII', 'MAGIC_NO_CHECK_TOKENS', 'MAGIC_NO_CHECK_FORTRAN',

'MAGIC_NO_CHECK_TROFF', 'magic_t', 'magic_open', 'magic_close', 'magic_file',

'magic_descriptor', 'magic_buffer', 'magic_error', 'magic_setflags',

'magic_load', 'magic_compile', 'magic_check', 'magic_errno']

Other than the custom import and the opaque structs, I should be

able to use most of this.

11.24.2008 17:54

mbsystem in fink

This has been coming up a lot lately.

Yes, I am still maintaining the fink install of MB-System. It

still is there and working (minus the OpenGL 3D graphics portion).

My fink packages: Fink - Package Database - Browse (Maintainer = 'Kurt Schwehr') and - MBSystem in fink

- install XCode

- install the binary package from http://finkproject.org

- run "fink configure" and activate the "unstable" tree. This edits /sw/etc/fink.conf and puts "unstable/main" on your "Trees:" line.

- run "fink selfupdate-rsync" (after the first time, you should just use "fink selfupdate")

- fink list mbsystem

- fink install mbsystem

- wait a good while as it builds everything

- run "dpkg -L mbsystem | grep bin" to see all the programs it installed.

less /sw/fink/10.4/unstable/main/finkinfo/sci/mbsystem.info

Note that 10.4 and 10.5 use a symbolic link so that the trees are the same.


11.22.2008 16:36

netflix on the XBox

Today we finally got to update the

XBox 360 and check out the big

update. It's very different. At first glance, the new UI is very

confusing. One very cool thing is that it now has Netflix built in. Add something to your

"play it now" list and it appears on your xbox list of movies after

a minute or two. Would be nice to be able to search all the

available movies, but it isn't that hard to just have your laptop

with you and select a movie that way. I was too lazy to hook up one

of my many computers to the TV, so this is a big help. The first

movie played started in absolutely horrible quality. But, after

hitting pause, making some popcorn, and coming back, the movie

restarted in full standard def NTSC video. I know... I'm still

using the same Sony 24" Trinitron that I bought in 1991 in Kimball

Hall.

And Microsoft totally ripped off the Wii Mii with the new avatars. The XBox and Wii are very different beasts and I have to say that the Wii is a smooth friendly interface whereas the XBox is full of eye candy and games that push what can be done with graphics, but is rough around the edges and sometimes the game interfaces are tough.


11.22.2008 09:33

A sick mac?

A week ago, a mac that I have had

running since late 2005/2006 suddenly kernel panicked. The machine

hadn't crashed since I'd updated it to 10.4 after a bad experience

with Microsoft's Virtual PC. It's a quad core 2.5GHz G5 with 8 GB

of RAM. Not fast compared to my laptop or mac mini, but a workhorse

that is currently my N-AIS box. I thought it was a fluke and

rebooted the machine. This week has seen 4 more kernel panics with

shorter and shorter intervals between them. Today, I ran DiskWarrior on it and

rebuilt the directory. Then I went ahead and finally upgraded the

os to 10.5... this was my last 10.4 box. I didn't want to rock the

boat with data logging, but since the machine is unhappy, I might

as well update the OS now.

Data logging is working again and it took an hour and a half from start to finish to install and update the OS. Now it is time to wait and see if the machine crashes.

6 hours later: the computer is still working.

Update 2008-25: The mac still seems to be doing well and it is much spunkier as a result of running Mac OSX 10.5.


% uptime 7:37 up 2 days, 21:12, 4 users, load averages: 0.95 0.75 0.60

11.21.2008 10:53

AIS Binary Messages and Okeanos Explorer at sunset

eNavigation 2008 finished on Wednesday and yesterday we had the RTCM SC121 Working Group on Expanded Use of AIS in VTS (focusing on AIS Binary Broadcast Messages [BBM] at the moment) at the Port of Seattle, Pier 69 in Seattle. The RTCM meeting had some intense and good discussion on the Zone message, which is now renamed Timed Area Notice [TAN] to follow my convention and remove confusion with the word zone. We also started looking into the waterways management messages. We will be using the St Lawrence Seaway and European RIS messages as background material to support our work. If you know of other waterway management AIS binary messages, please send them along.

Just because it is a cool picture...

11.20.2008 11:48

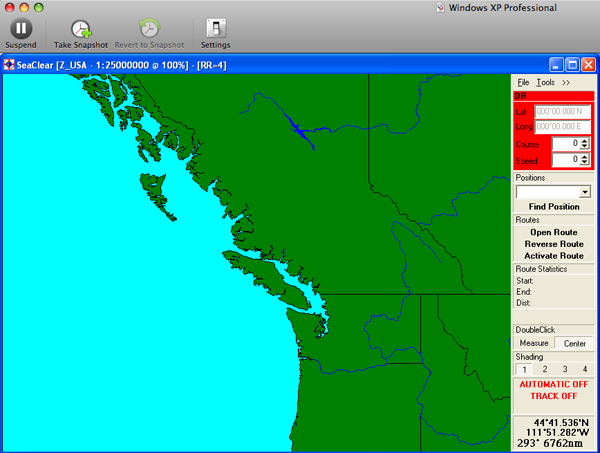

SeaClear chart

Brian T. pointed me to another free

charting application: SeaClear - PC Chart plotter and

GPS Navigation software. It includes support for AIS.

11.19.2008 15:57

python ctypes to wrap C code

I've looked at Python's ctypes

before, but this is the first time I've done this much. The

BSD/Darwin file command comes with a python interface, but it is

not very satisfying. It's written in CPython and is not

very flexible. I thought that I might be able to get ctypes to make

a simpler interface and allow for additional functionality to be

bolted on as I need it. First, here is the code:


#!/usr/bin/env python

import ctypes

# FIX: make a loader that will be cross platform

libmagic = ctypes.CDLL('/sw/lib/libmagic.1.0.0.dylib')

libmagic.magic_file.restype = ctypes.c_char_p

MAGIC_NONE = 0x000000

MAGIC_DEBUG = 0x000001 # Turn on debugging

MAGIC_SYMLINK = 0x000002 # Follow symlinks

# ...

class Magic:
    'Provide file identification services'
    def __init__(self, flags=MAGIC_NONE,filename=None):
        self.cookie = libmagic.magic_open(flags)
        result = libmagic.magic_load(self.cookie,filename)

    def id(self,filename):
        message = libmagic.magic_file(self.cookie,filename)
        return message

    def __del__(self):
        libmagic.magic_close(self.cookie)

if __name__ == '__main__':
    m = Magic()
    print m.id('magic.py')
    print m.id('does not exist')

Here is what the little demo outputs when run:

% ./magic.py
a python script text executable
cannot open `does not exist' (No such file or directory)

I start off the code by pulling in the ctypes library. One trick is that the return type of every function is assumed to be an integer. That works for everything here except the magic_file call, which returns a string. Therefore, I have to tell ctypes that its restype is c_char_p (aka a C string).
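
The restype point can be seen in isolation with a libc function that returns a C string. A small sketch, assuming a Unix-like libc that ctypes can locate; `errno_message` is a made-up helper name. The same care applies to functions that return pointers, such as the cookie from magic_open: on a 64-bit build the default int return type is too narrow for a pointer, so those would want a restype such as c_void_p.

```python
import ctypes
import ctypes.util

libc = ctypes.CDLL(ctypes.util.find_library('c'))

# Without this line, ctypes would treat the returned char* as a C int.
libc.strerror.restype = ctypes.c_char_p
libc.strerror.argtypes = [ctypes.c_int]


def errno_message(code):
    """Return the C library's message for an errno value as text."""
    return libc.strerror(code).decode('ascii', 'replace')


if __name__ == '__main__':
    print(errno_message(2))  # 2 is ENOENT on Linux and Mac OS X
```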

I did have trouble with #defines for flags. I can't pull these from the shared library as they never get defined in the library. If these had been const int values, I could have imported them.
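
For comparison, this is roughly what importing a real exported variable looks like. A sketch assuming a Unix-like libc that exports getopt's `optind` as a plain int; a #define such as MAGIC_DEBUG has no symbol in any library, so the lookup fails for it. `read_exported_int` and `has_symbol` are made-up helper names.

```python
import ctypes
import ctypes.util

libc = ctypes.CDLL(ctypes.util.find_library('c'))


def read_exported_int(library, symbol):
    """Read an int variable that the shared library actually exports."""
    return ctypes.c_int.in_dll(library, symbol).value


def has_symbol(library, symbol):
    """True if the library exports the symbol; False for macros."""
    try:
        ctypes.c_int.in_dll(library, symbol)
        return True
    except ValueError:  # in_dll raises ValueError for a missing symbol
        return False


if __name__ == '__main__':
    print(read_exported_int(libc, 'optind'))
    print(has_symbol(libc, 'MAGIC_DEBUG'))
```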

Compare this to just a little bit of the CPython code. Note that I am sure the CPython code will be slightly faster.

static char _magic_file__doc__[] =

"Returns a textual description of the contents of the argument passed \

as a filename or None if an error occurred and the MAGIC_ERROR flag \

is set. A call to errno() will return the numeric error code.\n";

static PyObject* py_magic_file(PyObject* self, PyObject* args)
{
    magic_cookie_hnd* hnd = (magic_cookie_hnd*)self;
    char* filename = NULL;
    const char* message = NULL;
    PyObject* result = Py_None;

    if(!(PyArg_ParseTuple(args, "s", &filename)))
        return NULL;

    message = magic_file(hnd->cookie, filename);
    if(message != NULL)
        result = PyString_FromString(message);
    else
        Py_INCREF(Py_None);
    return result;
}

The py_magic.c is called on the python side like this:

#!/usr/bin/env python

import magic

ms = magic.open(magic.MAGIC_NONE)

ms.load()

ms.file("somefile")

11.19.2008 14:49

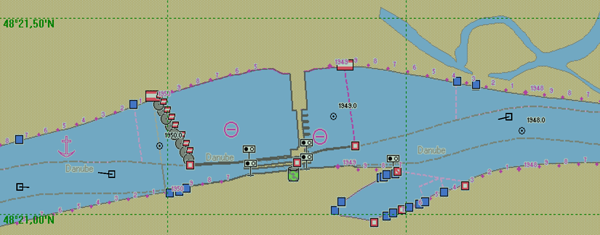

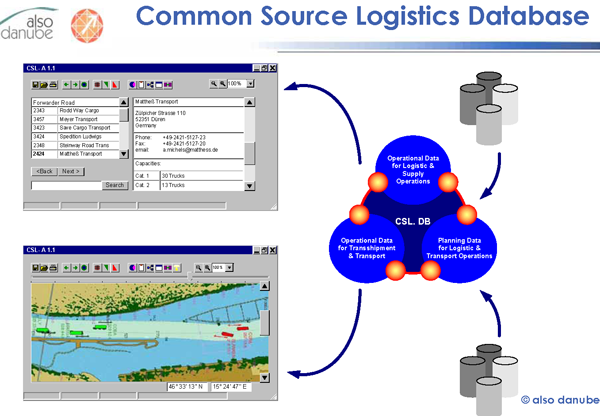

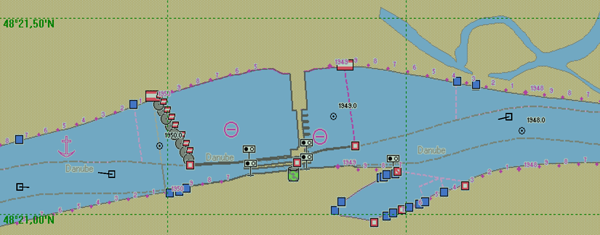

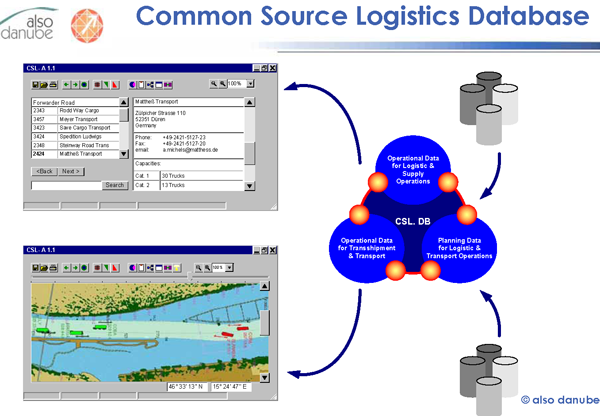

DoRIS

DoRIS is the Austrian River

Information System. Mario Sattler gave a presentation

entitled:

River Information Services come of Age - Shaping Tomorrow's Inland eNavigation with intelligent Infrastructure. A presentation of the application of the River Information System (RIS) to European rivers

From an older DoRIS presentation: DoRIS_Testcentre_.pdf

Implementation of River Information Services in Europe


11.19.2008 11:35

Okeanos Explorer

Thanks to Elaine for a tour of the

Okeanos Explorer. Here are a couple of images. The vessel has a dynamic positioning (DP) system and a Transas chart system.

Bridge communications systems:

HD over Internet2 (I2) is controlled here:


11.19.2008 08:40

MapMyPage - add Google Maps popups

I gave MapMyPage a quick try. It does do some funny things: it took "US Coast Guard" and found a random USCG facility.

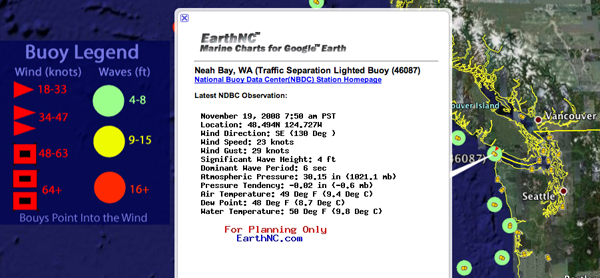

Found via Links: Where 2.0, Weather Buoys, Argentina, Earthscape, MapMyPage, and more [gearthblog]


11.18.2008 16:05

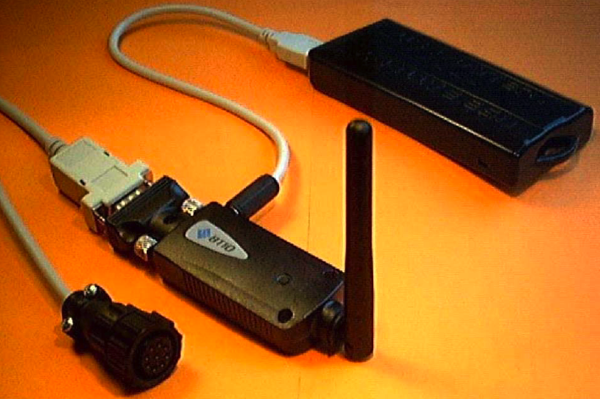

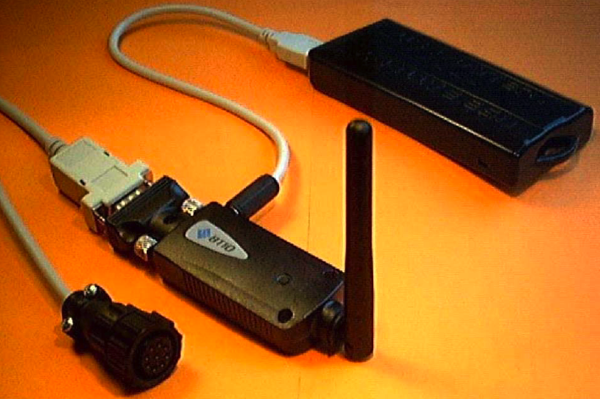

Bluetooth Pilot Interface

Captain Jorge Viso has these in his

talk today...


Turns out that Raven has exactly what I'm looking for:

Raven Industries Unveils Bluetooth Pilot Interface

Raven Industries, a manufacturer of lightweight Portable DGPS Pilot Systems, announced the introduction of the Bluetooth Pilot Interface (BPI): a Class 1 product that gives ship pilots a quality Bluetooth wireless device that transmits data from the ship's Pilot Port Interface (PPI) to a computer. The BPI features the Wire Wizard that automatically corrects mis-wired pilot plugs, a rechargeable battery, and small lightweight packaging. The integrated status lights, automatic baud-rate ...

TrueHeading also has one: Blue-Pilot.pdf

11.18.2008 14:07

Okeanos Explorer

I looked out the window from the

conference center at Pier 66 and saw the NOAA ship Okeanos

Explorer

11.18.2008 11:46

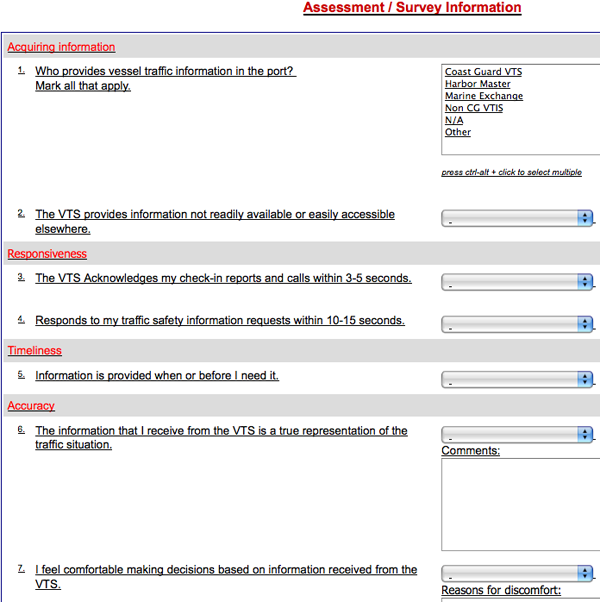

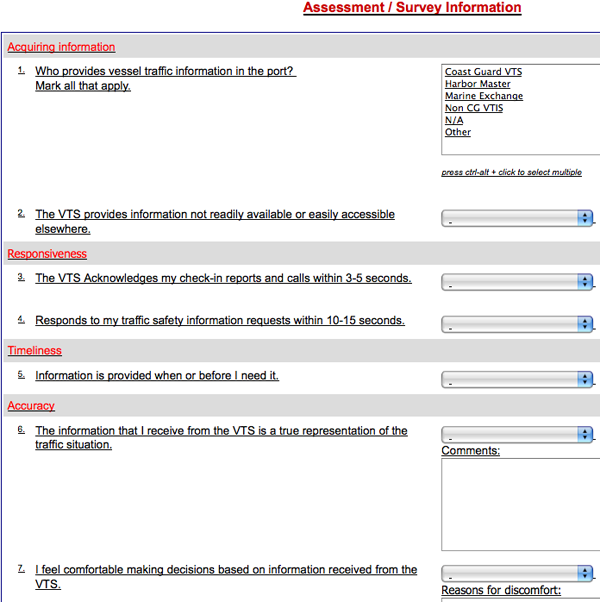

VTS Survey

During Brian Tetreault's presentation

on the Cosco Busan incident, he mentioned that the USCG is running

a survey of VTS users: USCG Customer

Satisfaction Survey of Vessel Traffic Services ... expires at

the end of Dec 2008. If you use a VTS, please go fill in the

survey.

11.18.2008 09:26

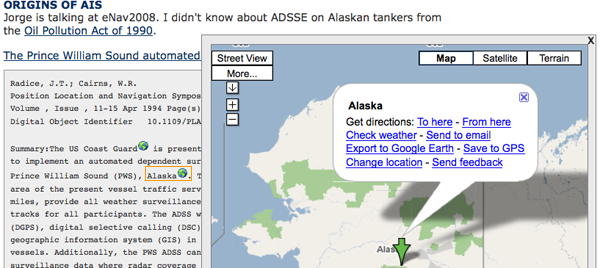

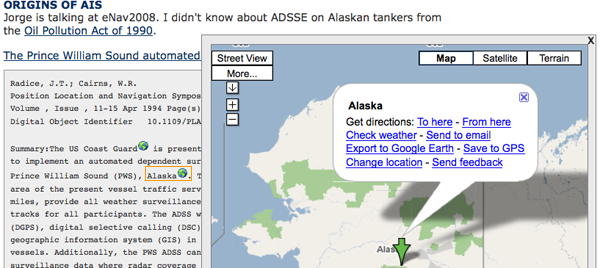

origins of AIS

Jorge is talking at eNav2008. I

didn't know about ADSS on Alaskan tankers from the Oil

Pollution Act of 1990.

The Prince William Sound automated dependent surveillance system


Radice, J.T.; Cairns, W.R. Position Location and Navigation Symposium, 1994, IEEE, 11-15 Apr 1994, Page(s): 823-832. Digital Object Identifier 10.1109/PLANS.1994.303397

Summary: The US Coast Guard is presently conducting a project in order to implement an automated dependent surveillance system (ADSS) in Prince William Sound (PWS), Alaska. The ADSS will expand the coverage area of the present vessel traffic service (VTS) by over 3000 square miles, provide all weather surveillance, and maintain well defined tracks for all participants. The ADSS will utilize differential GPS (DGPS), digital selective calling (DSC), and an electronic chart based geographic information system (GIS) in order to track participating vessels. Additionally, the PWS ADSS can integrate radar and dependent surveillance data where radar coverage is provided. This paper describes the impact of the PWS ADSS on current VTS systems, the forces behind ADS implementation, the ADS concept and design, along with suggested directions for ADS systems in the United States

Also, the new AIS rule coming soon will mean that ALL vessels 65 feet or longer will have to have AIS. Towing vessels 26 feet or greater and 600 hp will also need AIS.

11.18.2008 09:21

emacs dired - directory editor

I use dired in emacs fairly often.

It's easy to start... just C-x C-f to open a file and instead of a

file, select a directory name (e.g. ".").

Emacs: Dired Directory Management [Greg Newman]

I'll have to check out DiredPlus. And I just saw that there is PyMacs for crossing between emacs lisp and python. (Pymacs framework)


11.17.2008 18:10

eNavigation 2008 (formerly AIS) conference starts tomorrow

eNavigation 2008 starts tomorrow

in Seattle. There will be a big push to get AIS related stuff

figured out this week.

11.16.2008 07:34

How do I use matplotlib to make same scale graphs?

I wrote a program to walk through

some data and make one png plot per item with matplotlib. This is

pretty great, but how do I make the axes all the same range if the

min and max values are often different? In gnuplot, I use something

like this:


    set xrange [0:1000]
    set yrange [0:260]

With matplotlib in python, I was trying this, but it doesn't control the axes.

    import matplotlib.pyplot as plt

    plt.ioff()

    in_file = 'somedata.dat'
    some_data = data_class(in_file)

    for item_num,item in enumerate(some_data):
        out_filename = ('%s-%03d.png' % (in_file[:-4],item_num))
        print out_filename
        p = plt.subplot(111)
        p.set_xlabel('sample number')
        p.set_ylabel('value')
        p.set_title('File: %s Item num: %d' % (in_file[:-3], item_num))
        p.set_ylim(0,260)
        p.plot(item)
        #p.draw()
        plt.savefig(out_filename) #,'png') # Freaks out if I remind it that I want png
        plt.close()

With great power comes great complexity and pain. Gnuplot is easy, but I often push past its capabilities. So lazyweb, what's the answer?
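One possible approach, sketched here in Python 3 rather than the Python 2 of the post, is to scan all the items first and compute one set of limits that every plot then shares. The helper name `common_limits` and its `pad_fraction` parameter are made up for this illustration, and it assumes every item is a non-empty sequence of numbers.

```python
def common_limits(items, pad_fraction=0.05):
    """Return ((xmin, xmax), (ymin, ymax)) covering every series in items."""
    series = [list(item) for item in items]
    longest = max(len(s) for s in series)
    lo = min(min(s) for s in series)
    hi = max(max(s) for s in series)
    span = hi - lo
    # Pad the y range a little; fall back to 1.0 if all values are equal.
    pad = span * pad_fraction if span else 1.0
    return (0, longest - 1), (lo - pad, hi + pad)


# Two made-up series with different lengths and ranges.
demo = [[3, 10, 7], [120, 80, 200, 259]]
xlim, ylim = common_limits(demo, pad_fraction=0.0)
print(xlim, ylim)
```

Inside the plotting loop the result would then be applied with the Axes methods `p.set_xlim(*xlim)` and `p.set_ylim(*ylim)`. Calling them after `p.plot(item)` is a guess at guarding against autoscaling resetting the range, not a confirmed diagnosis of the problem above.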

11.14.2008 16:38

AIS on the Healy

Phil just pointed me to this in his

RVTEC meeting notes: The

UNOLS Research Vessel Technical Enhancement Committee (RVTEC)

Meeting

30. AIS use on HEALY, D. Chayes: Used $250 AIS to track LSSL (Louis S. St Laurent) position. ... Used Kurt Schwehr's Python encoding off his webpage.

11.14.2008 16:16

MA bridge allision

Of local interest...

Barge collides with Amesbury Swing Bridge [coastguardnews.com]

The Coast Guard is investigating what caused a 250-foot barge to strike the Amesbury Swing Bridge in the Merrimack River around 12 p.m. today. Coast Guard Sector Boston received a call at approximately 11:50 a.m., reporting the barge William Breckenridge hit the Swing Bridge, which connects Newburyport to Amesbury, as it transited through the channel near Deer Island. No injuries or pollution have been reported. The Massachusetts State Highway Department is inspecting the extent of the damage to the bridge. ...

11.14.2008 12:09

spidering a disk

I've been thinking about how to best spider a disk. In python, there is the os.path.walk function. Combining that with the python interface to file (the magic module), we get the beginnings of a spider utility.

    #!/usr/bin/env python
    import sys
    import os.path
    import magic

    ms = magic.open(magic.MAGIC_NONE)
    ms.load()

    def visit(arg, dirname, names):
        # One header line per directory, then one line per entry
        print '==',dirname,'=='
        for name in names:
            file_type = ms.file(dirname+'/'+name)
            print ' ',name,file_type

    os.path.walk('.', visit, 'arg')

This is similar to this shell command:

    % find . | xargs file

The above spider.py outputs something like this (but won't yet work on windows due to '/'):

% spider.py

== . ==

acinclude.m4 ASCII M4 macro language pre-processor text

aclocal.m4 ASCII English text, with very long lines

AUTHORS ASCII text, with no line terminators

ChangeLog ISO-8859 English text

config.guess POSIX shell script text executable

== ./doc ==

file.man troff or preprocessor input text

libmagic.man troff or preprocessor input text

magic.man troff or preprocessor input text

Makefile.am ASCII text

Makefile.in ASCII English text

== ./magic ==

Header magic text file for file(1) cmd

Localstuff ASCII text

Magdir directory

Makefile.am ASCII English text

Makefile.in ASCII English text

== ./magic/Magdir ==

acorn ASCII English text

adi ASCII text

adventure ASCII English text

allegro ASCII text

alliant ASCII English text

== ./python ==

example.py ASCII Java program text

Makefile.am ASCII text

Makefile.in ASCII English text

py_magic.c ASCII C program text

py_magic.h ASCII C program text
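For anyone trying this on Python 3, where os.path.walk no longer exists, a rough equivalent can be sketched with os.walk and os.path.join (which also sidesteps the '/' problem). To stay within the standard library this sketch swaps libmagic for mimetypes, which only guesses from the file extension and never looks at file contents, so it is a much weaker stand-in. The function name `spider` and the demo file names are invented for the example.

```python
import mimetypes
import os
import tempfile


def spider(top):
    """Yield (path, guessed_type) for every file below top."""
    for dirname, subdirs, filenames in os.walk(top):
        subdirs.sort()  # visit subdirectories in a predictable order
        for name in sorted(filenames):
            path = os.path.join(dirname, name)
            guessed_type, _encoding = mimetypes.guess_type(path)
            yield path, guessed_type or 'unknown'


# Small demonstration on a throwaway directory tree.
with tempfile.TemporaryDirectory() as top:
    os.mkdir(os.path.join(top, 'doc'))
    for rel in ('README.txt', os.path.join('doc', 'notes.html')):
        with open(os.path.join(top, rel), 'w') as out:
            out.write('hello\n')
    for path, file_type in spider(top):
        print(os.path.relpath(path, top), file_type)
```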

11.14.2008 10:19

Mac networking issue?

Last night, I tried to connect my mac

laptop to a Linksys wireless router. I've connected to this router

many times before without trouble. However, last night, it

connected, but nothing worked. When I looked at the network

settings, I saw that I had a self-assigned IP address. I could ping

the router, but was otherwise out of luck. I tried connecting via

wired ethernet with the same result. I found in my syslog something

like this:

    Nov 13 19:47:52 CatBoxIII mDNSResponder[21]: Note: Frequent transitions for interface en0 (169.254.237.105); network traffic reduction measures in effect

What? Now, back at the office, things are working just fine both wireless and wired. What gives? This is an up-to-date intel mac running 10.5.5. Is there a bug in the mac dhcp handler? Another computer was using this router just fine.

11.13.2008 15:22

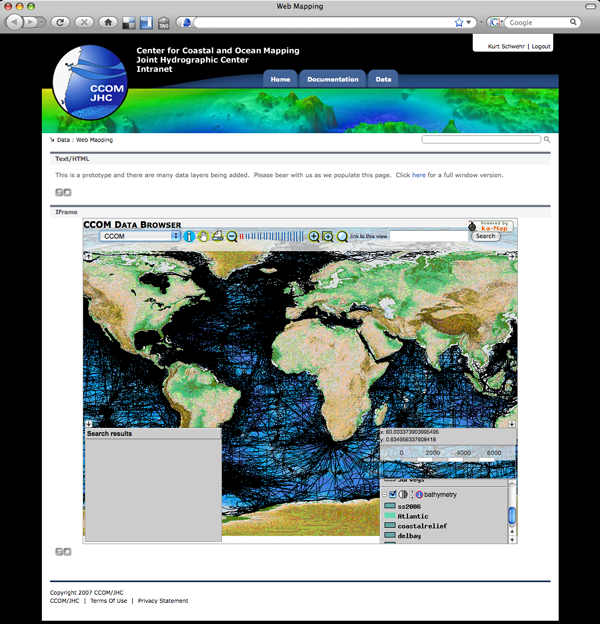

ka-Map and GeoMedia

Mashkoor sent out an email today asking for people to get their data submitted to NGDC. He sent out the link again to our intranet browser-based web mapping system that Jim Case had done. It is driven by Intergraph's GeoMedia [wikipedia], ka-Map, and a big Oracle database. Here is a quick screenshot of the tool as it exists presently:

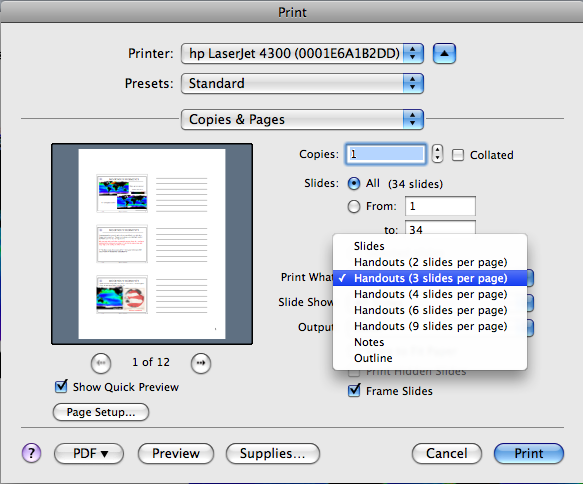

11.13.2008 09:35

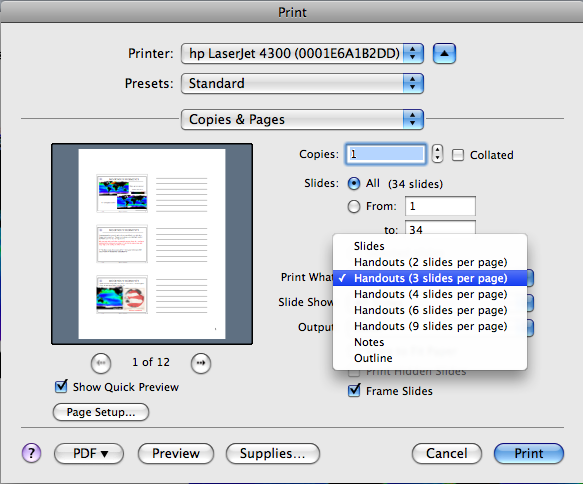

PowerPoint handout printing mode

I didn't know until today that this

is how everybody around me has been turning their powerpoints into

handouts to take notes on.

11.13.2008 09:05

Scripting seminar

Yesterday, I gave the CCOM student

seminar on scripting. I mostly focused on python and there were a

lot of good questions and comments during the seminar.

20081112-Scripting


11.13.2008 09:03

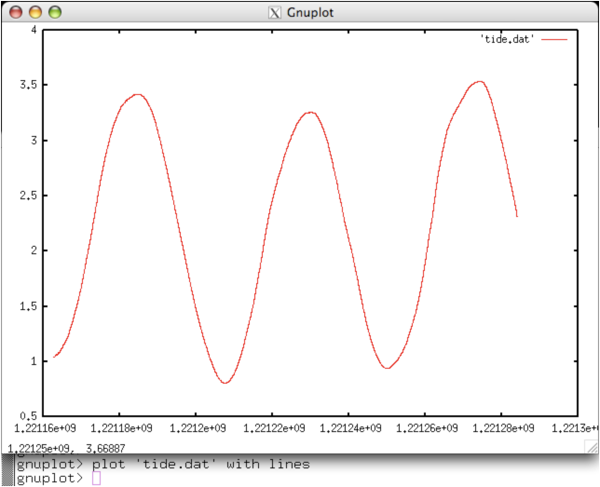

Tide data for tappy

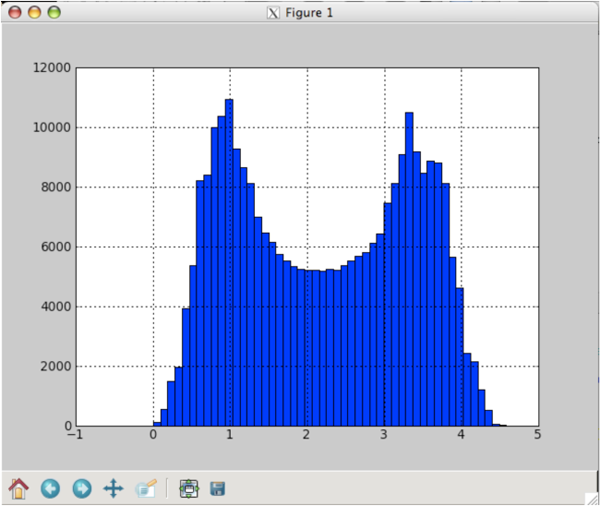

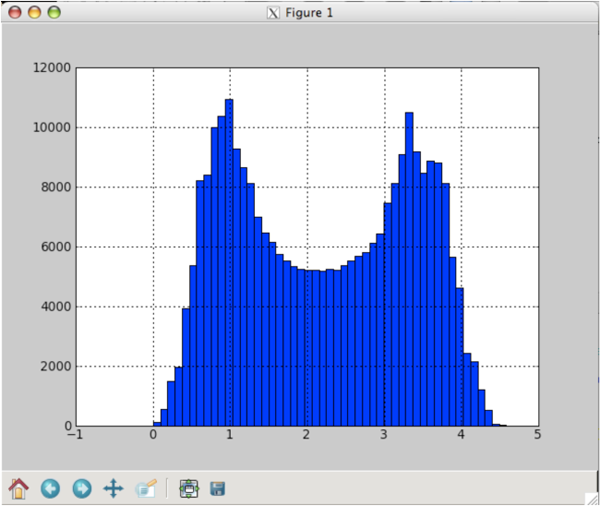

Here is the tide data that I used for

tappy. Small section of waterlevels after converting to metric:


Here is a histogram done in matplotlib:

#!/usr/bin/env python2.5

import matplotlib.pyplot as plt

import matplotlib.mlab as mlab

waterlevels = mlab.load('tide-all-2.dat')

n, bins, patches = plt.hist(waterlevels, 50)

plt.grid(True)

plt.show()

Also, here is the sparse.def file that tappy.py uses for my data:

parse = [

positive_integer('year'),

positive_integer('month'),

positive_integer('day'),

positive_integer('hour'),

positive_integer('minute'),

positive_integer('second'),

real('water_level'),

]
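The definition above describes seven whitespace-separated columns: six integer date/time fields followed by a real water level. As an illustration of what that layout means (this is plain Python 3, not tappy's own parser, and the function name `parse_tide_line` is made up), one line of such a file could be read like this:

```python
from datetime import datetime


def parse_tide_line(line):
    """Split 'YYYY MM DD HH MM SS level' into (datetime, float)."""
    fields = line.split()
    if len(fields) != 7:
        raise ValueError('expected 7 fields, got %d' % len(fields))
    year, month, day, hour, minute, second = (int(f) for f in fields[:6])
    return datetime(year, month, day, hour, minute, second), float(fields[6])


# A line in the same layout as the converted tide data.
when, level = parse_tide_line('2008 09 11 19 51 48 1.04059800168')
print(when.isoformat(), level)
```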

11.13.2008 07:18

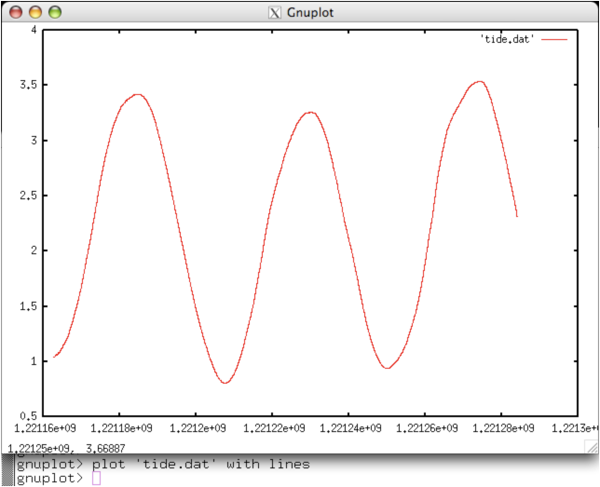

tappy - tidal analysis in python

Yesterday, I tried tappy. The program crashed and I sent Tim, the author, a crash report. Last night, he put a new version up on SourceForge and it seems to work! I need to look at the output to see if it makes sense, but here is the process so far.

    % head tide2.raw
    # START LOGGING UTC seconds since the epoch: 1221162698.25
    # SPEED: 9600
    # PORT: /dev/ttyS0
    # TIMEOUT: 300.0
    # STATIONID: memma
    # DAEMON MODE: True
    635 222 497,rmemma,1221162708.95
    635 222 497,rmemma,1221162721.07
    635 222 497,rmemma,1221162733.2
    635 223 497,rmemma,1221162745.32

Then I converted the engineering units to meters above the sensor.

    #!/usr/bin/env python
    import datetime

    # Coefficients for water level in meters
    A=-1.008E-01
    B=5.125E-03
    C=7.402E-08
    D=0.

    out = file('tide-tappy.dat','w')
    for line in file('tide2.raw'):
        try:
            fields = line.split()
            station_id = int(fields[0])
            waterlevel_raw = int(fields[1])
            temp_c, station_name, timestamp = fields[2].split(',')
            temp_c = int(temp_c)
            timestamp = float(timestamp)
            N = waterlevel_raw
            waterlevel = ( A + B*N + C*(N**2) + D*(N**3) )
        except:
            print 'bad data:',line
            continue
        time_str = datetime.datetime.utcfromtimestamp(timestamp).strftime("%Y %m %d %H %M %S")
        out.write('%s %s\n' % (time_str,waterlevel))

The metric result was this:

    % head tide-tappy.dat
    2008 09 11 19 51 48 1.04059800168
    2008 09 11 19 52 01 1.04059800168
    2008 09 11 19 52 13 1.04059800168
    2008 09 11 19 52 25 1.04575594058
    2008 09 11 19 52 37 1.04575594058
    2008 09 11 19 52 49 1.04575594058
    2008 09 11 19 53 01 1.04575594058
    2008 09 11 19 53 13 1.04575594058
    2008 09 11 19 53 25 1.04575594058
    2008 09 11 19 53 38 1.04575594058

Note that this data is going to be non-zero mean and all the data is way above 0. I don't know if that will cause problems with the tidal analysis. After clipping out a large continuous chunk of data, I still had 247K water levels. That will take a long time, so I ran my decimate script to get it down to 22K samples:

    decimate -l 10 tide-tappy-short.dat > tide-tappy-short2.dat

Now that I have a reasonably sized data set that will run faster, here are the results:

% tappy.py tide-tappy-short2.dat

# NAME SPEED H PHASE

# ==== ===== = =====

Mm 0.54437626 0.1129 41.9029

MSf 1.01589577 0.0358 287.9779

2Q1 12.85428307 0.0115 188.2313

Q1 13.39865933 0.0248 200.5201

O1 13.94303559 0.1089 187.5583

NO1 14.49669238 0.0201 93.0352

K1 15.04106864 0.1053 173.8819

J1 15.58544490 0.0046 165.1417

OO1 16.13910169 0.0065 316.7976

ups1 16.68347795 0.0056 214.2090

MNS2 27.42383220 0.0128 164.7614

mu2 27.96820846 0.0873 291.0659

N2 28.43972797 0.2989 78.1917

M2 28.98410423 1.5892 105.8615

L2 29.52848049 0.0857 119.4599

S2 30.00000000 0.2055 129.5660

eta2 30.62651354 0.0193 21.9170

2SM2 31.01589577 0.0032 315.2719

MO3 42.92713982 0.0056 240.8188

M3 43.47615634 0.0011 145.7261

MK3 44.02517287 0.0039 147.9154

SK3 45.04106864 0.0031 8.1197

MN4 57.42383220 0.0186 26.3005

M4 57.96820846 0.0210 73.1954

SN4 58.43972797 0.0097 275.5858

MS4 58.98410423 0.0075 68.4415

S4 60.00000000 0.0011 315.0172

2MN6 86.40793643 0.0169 117.4640

M6 86.95231269 0.0407 124.8344

2MS6 87.96820846 0.0234 164.1220

2SM6 88.98410423 0.0075 256.1520

S6 90.00000000 0.0026 304.1771

M8 115.93641692 0.0068 174.5350

# INFERRED CONSTITUENTS

# NAME SPEED H PHASE

# ==== ===== = =====

rho1 13.47151608 0.0041 58.4877

M1 14.49205211 0.0077 248.6799

P1 14.95893136 0.0349 260.5434

2N2 27.89535171 0.0413 76.3025

nu2 28.51258472 0.0604 175.2170

lambda2 29.45562374 0.0111 331.9892

T2 29.95893332 0.0121 101.4720

R2 30.04106668 0.0016 110.5621

K2 30.08213728 0.0559 63.0246

# AVERAGE (Z0) = 2.21195756363
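One way to compare these numbers against the data would be to sum the constituents back into a predicted water level, h(t) = Z0 + sum of H * cos(speed * t - phase). The Python 3 sketch below does that with the five largest constituents copied from the table. It rests on several assumptions that I have not checked against tappy: that speeds are in degrees per hour, that t is measured in hours from whatever reference time the phases refer to, and that nodal corrections can be ignored. The function name `predict_level` is invented for the example.

```python
import math

# (name, speed in degrees/hour, amplitude H, phase in degrees),
# the five largest constituents from the table above.
CONSTITUENTS = [
    ('M2', 28.98410423, 1.5892, 105.8615),
    ('N2', 28.43972797, 0.2989, 78.1917),
    ('S2', 30.00000000, 0.2055, 129.5660),
    ('O1', 13.94303559, 0.1089, 187.5583),
    ('K1', 15.04106864, 0.1053, 173.8819),
]
Z0 = 2.21195756363  # mean level reported as AVERAGE (Z0)


def predict_level(hours, constituents=CONSTITUENTS, mean_level=Z0):
    """Sum the cosine terms at a time given in hours from the reference."""
    total = mean_level
    for _name, speed, amplitude, phase in constituents:
        total += amplitude * math.cos(math.radians(speed * hours - phase))
    return total


# Predicted level every 3 hours over half a day (time origin is assumed).
for hour in range(0, 13, 3):
    print(hour, round(predict_level(hour), 3))
```

Plotting this curve over the decimated samples would at least show whether the dominant M2 signal lines up, even if the absolute timing needs adjusting.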

I now need to plot this up against the data to see how it did. I might need to add some flags to tappy, but I need more time to give it a look.

11.12.2008 13:32

European LRIT data center

Moving Closer to an EU LRIT Data Centre [emsa.europa.eu]

press_release_07-11-2008.pdf

Today, 6 November 2008, at the European Maritime Safety Agency (EMSA) headquarters in Lisbon contracts have been signed for the development of the European Union Long Range Identification and Tracking Data Centre. This step marks the end of a European public procurement process started with the tender publication on 11 June 2008. Willem de Ruiter, EMSA Executive Director, Christophe Vassal, CEO of CLS (Collecte Localisation Satellites) based in Ramonville, France and Isabelle Roussin, Executive Vice President of Marketing for Highdeal S.A., based in Paris, France met to underline their dedication to setting-up a Data Centre for the EU Member States as early as possible in 2009. Mr. de Ruiter expressed his confidence in the two companies to set up a robust system for the collection and distribution of long range ship position reports. Mr. de Ruiter recalled that it was only on 2 October 2007 that the task of providing an EU LRIT Data Centre was given to the Agency by the European Transport Ministers. Substantial work has been carried out by a dedicated task force since this date. He stated: "With the professional experience of these companies (CLS and Highdeal) I trust that a good European LRIT system will soon be available to serve over 10,000 vessels flying the flag of EU Member States and interested Overseas Territories." ...

11.12.2008 10:12

pyproj example

I don't think I've ever posted a

pyproj example in my blog, so here is a quick one:

    % python
    >>> from pyproj import Proj
    >>> params={'proj':'utm','zone':19}
    >>> proj = Proj(params)
    >>> proj(-70.2052,42.0822)
    (400316.0002622169, 4659605.5586070241)
    >>> proj(400316.0002622169, 4659605.5586070241,inverse=True)

11.12.2008 10:01

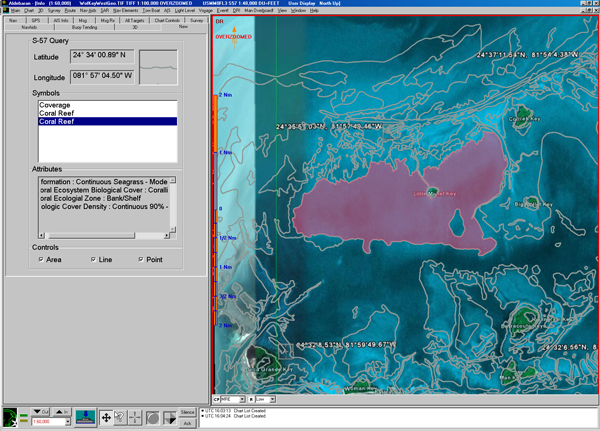

Marine Information Objects/Overlays for Marine Protected Areas

ICAN and CARIS have been working with

NOAA on Marine Information Objects/Overlays (MIO) of Marine

Protected Areas (MPA). I don't know the full details of the

project, but I just got permission to share some screen shots from

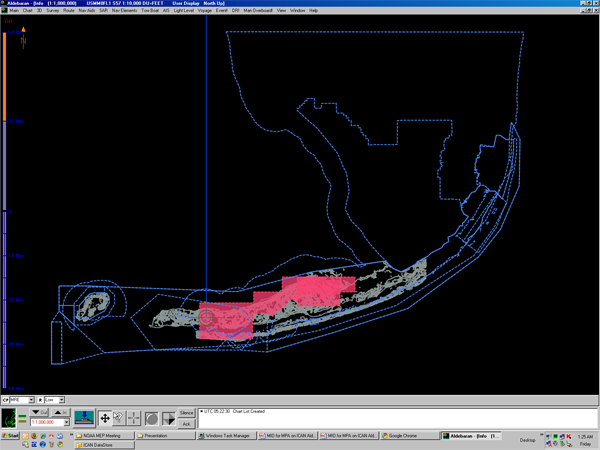

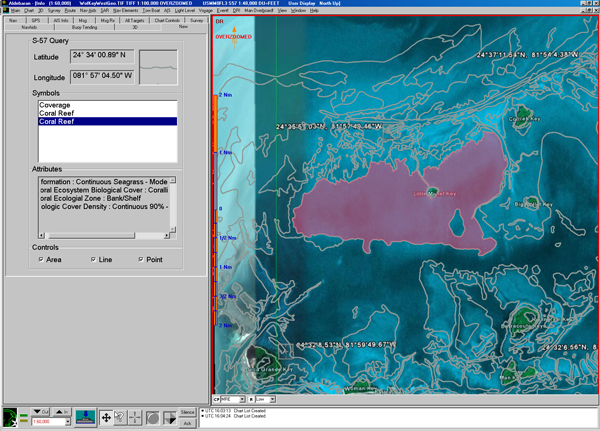

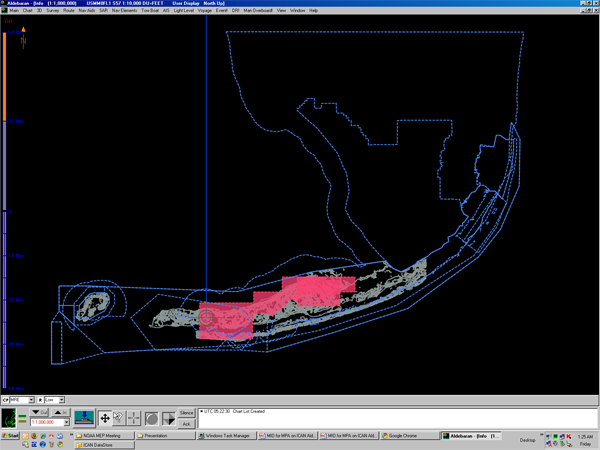

Aldebaran II. I belive both of these are off of Florida.

11.12.2008 04:09

GIS Day at UNH Today

Today is GIS Day at UNH. Not quite the same day as the normal GIS Day.

11.11.2008 16:53

Right whale AIS Project - PVA demonstration

Yesterday, Dave Wiley, Dudley Baker,

and I gave an invited talk at the Passenger Vessel

Association's Original Colonies Region Meeting in Portsmouth.

Nice group and some good questions. Expect to see an article on

Right Whales next month in their FogHorn magazine. (Membership

required)

I was down a power supply, so the demo yesterday featured some new code hot off the keyboard. I added synthetic AIVDM NMEA string generation to my code so that my laptop produced AIS Binary Broadcast Message sentences as if it were an AIS receiver. It then sent them out the serial port from my Mac to Dudley's PC running Regulus II. The ECS software can't tell the difference, so the audience could see the whale zone messages populating one by one as my code sent them. I put a delay of a couple of seconds between the 10 messages for dramatic effect. I also used this channel to give near-realtime AIS targets for the SBNMS: I recorded 15 minutes of AIS messages from N-AIS and played them back over the serial port. The only special hardware involved was a serial null modem.
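The framing part of generating such sentences is simple enough to sketch. An AIVDM sentence is a comma-separated body followed by a checksum, where the checksum is the XOR of every character between the leading '!' and the '*', written as two hex digits. The Python 3 sketch below shows only that wrapping step for a single-fragment sentence. It is not the code used in the demo, the function names are made up, and the payload is a placeholder rather than a real encoded binary broadcast message.

```python
def nmea_checksum(body):
    """XOR of every character between the leading '!' and the '*'."""
    value = 0
    for char in body:
        value ^= ord(char)
    return '%02X' % value


def wrap_aivdm(payload, fill_bits=0, channel='A'):
    """Build a single-fragment AIVDM sentence around an armored payload."""
    body = 'AIVDM,1,1,,%s,%s,%d' % (channel, payload, fill_bits)
    return '!%s*%s' % (body, nmea_checksum(body))


def is_valid(sentence):
    """Recompute the checksum of a sentence and compare it to the one given."""
    body, _, checksum = sentence.strip().lstrip('!$').partition('*')
    return nmea_checksum(body) == checksum.upper()


sentence = wrap_aivdm('PLACEHOLDER')  # placeholder, not a real AIS payload
print(sentence, is_valid(sentence))
```

Writing the resulting lines to a serial port, with a pause between them, would need a serial library and is left out here.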


11.11.2008 16:42

Winter has come to the SeaCoast area

We were over in Portsmouth this

morning and winter has definitely come to the area.

11.11.2008 06:56

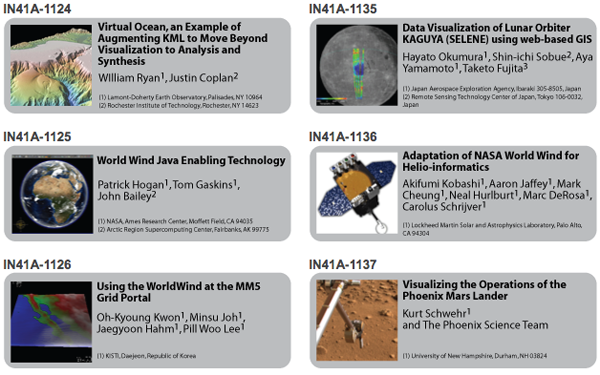

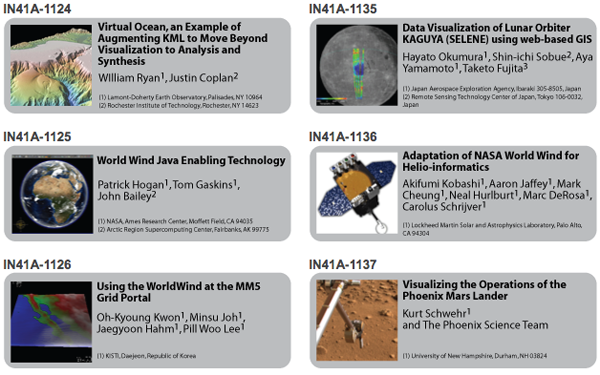

AGU Virtual Globes session

Update 18-Nov-2008: Google Oceans

will not be out during 2008.

A quick aside: I hear that Google Oceans has been delayed to Dec 9th. Google also has Introducing Google.org Geo Challenge Grants

The web page is now up: Virtual Globes at AGU 2008

Visualizing the Operations of the Phoenix Mars Lander


11.10.2008 19:03

Phx end of mission

Mars Phoenix Returns to Ashes [wired] with interactive timelines.


Mars Phoenix Lander Finishes Successful Work On Red Planet [LPL]

November 10, 2008 -- NASA's Phoenix Mars Lander has ceased communications after operating for more than five months. As anticipated, seasonal decline in sunshine at the robot's arctic landing site is not providing enough sunlight for the solar arrays to collect the power necessary to charge batteries that operate the lander's instruments. Mission engineers last received a signal from the lander on Nov. 2. Phoenix, in addition to shorter daylight, has encountered a dustier sky, more clouds and colder temperatures as the northern Mars summer approaches autumn. The mission exceeded its planned operational life of three months to conduct and return science data. The project team will be listening carefully during the next few weeks to hear if Phoenix revives and phones home. However, engineers now believe that is unlikely because of the worsening weather conditions on Mars. While the spacecraft's work has ended, the analysis of data from the instruments is in its earliest stages. "Phoenix has given us some surprises, and I'm confident we will be pulling more gems from this trove of data for years to come," said Phoenix Principal Investigator Peter Smith of the University of Arizona in Tucson. ...

From Andy M.: Mars Lander Succumbs to Winter [New York Times]

Requiem for a Robot: Mars Probe Dies [abcnews]

Phoenix Mars Lander Declared Dead [slashdot]

JPL has a tribute video: Phoenix - A Tribute and Phoenix

11.10.2008 18:00

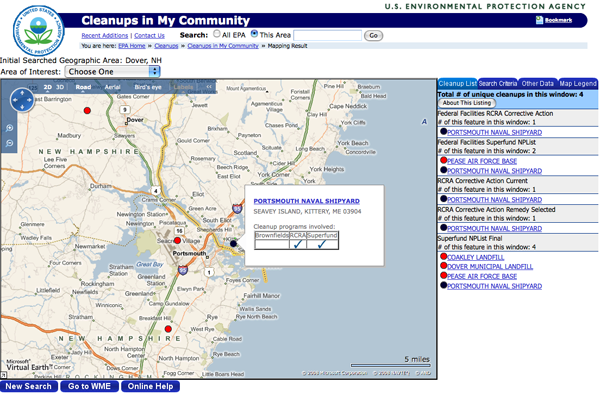

EPA uses MS Virtual Earth

Some concepts of the Environmental Response Management Application (ERMA) are in here. We've met with the EPA to share ideas and it is exciting to see this kind of application.

Microsoft Virtual Earth used by EPA for "Cleanups in My Community" web site [virtualearth4gov]


Accidents, spills, leaks, and past improper disposal and handling of hazardous materials and wastes have resulted in tens of thousands of sites across our country that have contaminated our land, water (groundwater and surface water), and air (indoor and outdoor). Some of the more common categories of contaminants include: industrial solvents, petroleum products, metals, pesticides, bacteria, and radiological materials. These contaminated sites can threaten human health as well as the environment, in addition to hampering economic growth and the vitality of local communities. EPA and its state and territorial partners have developed a variety of cleanup programs to assess and, where necessary, clean up these contaminated sites. Cleanups may be done by EPA, other federal agencies, states or municipalities, or the company or party responsible for the contamination. Click the following links for more cleanup-related information and resources. The EPA has expanded its use of the Virtual Earth mapping platform through its "Cleanups in My Community" web portal to provide a mapping and listing tool that shows sites where pollution is being or has been cleaned up throughout the United States. It maps, lists and provides cleanup progress profiles...

11.10.2008 17:41

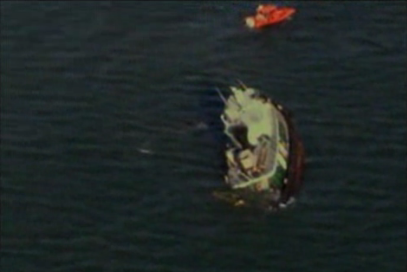

Buzzards Bay grounding

Just found out that I know someone

who was on this tug. Small world.

Boston based tug runs aground near Buzzards Bay [Coast Guard News]


Coast Guard crews helped a Boston-based tug crew today after they grounded their vessel near Buzzards Bay, Mass., around 1 p.m. No injuries were reported of the three crewmembers aboard the tug Southern Cross. Coast Guard Sector Southeastern New England received a radio call from the tug reporting that the engine room was flooding and could not be controlled. The crew said they planned to beach the vessel to keep it from sinking. ...

11.09.2008 19:50

SWIG interface for GSF

I've been thinking about a python interface for the SAIC Generic Sensor Format (GSF) for sensor data. If I use SWIG, then it can support other languages in addition to Python (e.g. Perl). However, it turns out to create a very awkward interface. I was hoping that it would be easy to do a quick swig run and that I could write a Python class around it, but I am wondering if that is worth my time. Roland has taken a liking to boost, but this is straight C and I think that ctypes would work nicely (and keep me working in python as much as possible). Can SWIG include docstrings?

Here is what I've got on the SWIG front. I probably won't put more into it, but feel free to take it farther if you want. My first try was to let swig do all the work. In the make file, I had to add a CFLAG:


CFLAGS += ${shell python-config --includes} -std=c99

Then I build the library like this:

    % swig -python -module gsf gsf.h # Produces gsf_wrap.c and gsf.py
    % perl -pi -e 's|\#define SWIGPYTHON|\#include "gsf.h"\n\#define SWIGPYTHON|' gsf_wrap.c
    % gcc-4 -O3 -funroll-loops -fexpensive-optimizations -DNDEBUG -ffast-math \
        -Wall -Wimplicit -W -pedantic -Wredundant-decls -Wimplicit-int \
        -Wimplicit-function-declaration -Wnested-externs -march=core2 \
        -I/sw/include/python2.6 -I/sw/include/python2.6 -std=c99 \
        -c -o gsf_wrap.o gsf_wrap.c
    % gcc-4 -L/sw/lib -bundle -undefined dynamic_lookup libgsf.a gsf_wrap.o -o _gsf.so
    % ipython
    In [1]: import gsf

I was then unable to use gsf.gsfOpen. The prototype for gsfOpen shows that it returns the handle in the memory pointed to by handle.

    int gsfOpen(const char *filename, const int mode, int *handle);

I then read SWIG:Examples:python:pointer [os.apple.com]. I realized that I have to create a swig interface file to go this route. In the examples, they have a typemap to specify which pointers are inputs and which are outputs.

// -*- c -*-

%module gsf

//

#define GSF_CREATE 1

#define GSF_READONLY 2

#define GSF_UPDATE 3

#define GSF_READONLY_INDEX 4

#define GSF_UPDATE_INDEX 5

#define GSF_APPEND 6

//

//%{

//int gsfOpen(const char *filename, const int mode, int *handle);

//%}

//

%include typemaps.i

int gsfOpen(const char *INPUT, const int INPUT, int* OUTPUT);

Now I run swig on the .i interface definition to build the gsf_wrap.c file:

    % swig -python gsf.i

Then recompile as above and build the .so shared object. Here is running what I have so far:

% ipython

In [1]: import gsf

In [2]: status,fd = gsf.gsfOpen('C1101635.gsf',gsf.GSF_READONLY)

In [3]: print status,fd

(0, 1)
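For comparison, here is roughly what the ctypes route mentioned earlier might look like for the same call, written for Python 3. The out-parameter is handled by passing a `c_int` by reference and reading its value afterwards, which gives the same `(status, handle)` pair as the SWIG OUTPUT typemap. This is an untested sketch: the helper names are made up, and the shared library name in the comment is an assumption, since the build above only links a static libgsf.a.

```python
import ctypes

GSF_READONLY = 2  # same value as the #define in the interface file above

# C prototype: int gsfOpen(const char *filename, const int mode, int *handle);
GSF_OPEN_ARGTYPES = [ctypes.c_char_p, ctypes.c_int, ctypes.POINTER(ctypes.c_int)]


def bind_gsf_open(lib):
    """Attach the C prototype to gsfOpen on an already loaded library."""
    lib.gsfOpen.argtypes = GSF_OPEN_ARGTYPES
    lib.gsfOpen.restype = ctypes.c_int
    return lib.gsfOpen


def gsf_open(open_func, filename, mode=GSF_READONLY):
    """Call gsfOpen and return (status, handle)."""
    handle = ctypes.c_int(-1)
    status = open_func(filename.encode(), mode, ctypes.byref(handle))
    return status, handle.value


# Hypothetical usage; 'libgsf.so' is an assumed shared build of the library:
# lib = ctypes.CDLL('libgsf.so')
# status, handle = gsf_open(bind_gsf_open(lib), 'C1101635.gsf')
```

A Python class wrapping these calls could then own the handle and close it when done, without any generated wrapper code in between.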

What I really want is something like this:

    import mb.gsf

    new_line = gsf('C1101635-v2.gsf',gsf.CREATE) # Note that I got rid of GSF_ from GSF_CREATE
    for ping in gsf('C1101635.gsf'): # Note that GSF_READONLY is the default
        # plot ping
        new_ping = ping.dup() # or gsf.ping(template=ping) or gsf.ping(ping) to copy all the nav in a ping?
        for beam_num,beam in enumerate(ping):
            # Apply some function to the beam
            new_ping[beam_num] = some_transform(beam)
    new_line.close()

Or something like that. BTW, I had trouble finding GSF lines while not at CCOM. Thanks to Dave C. for this Reson 8101 survey off of Alaska: H10906 [NGDC] and I was looking at this file: C1101635.gsf. Hmm... try googling for H10906 multibeam. Or search for H10906 site:noaa.gov - these sites are really not set up well for searching. I tried to find the metadata.

11.09.2008 17:04

Slashdot on emacs tricks

(Stupid) Useful Emacs Tricks? [Ask Slashdot] First, try these:

    M-x tetris
    M-x doctor
    M-x yow
    M-x phases-of-moon
    M-x hanoi
    M-x spook

And for your .emacs file:

;; remote compile support

(setq remote-compile-host "hostname")

(setq remote-shell-program "/usr/bin/ssh")

(setq default-directory "/home/username/compileDir")

;; remote edit of files:

(require 'tramp)

(setq tramp-default-method "scp")

;in one's .emacs file. Then open remote files with:

;/username@host:remote_path

;/ssh:example.com:/Users/someone/.emacs

;C-x C-f /sudo::/path/to/file, or su: C-x C-f /root@localhost:/path/to/file.

;

(setq inhibit-startup-message t)

(menu-bar-mode -1)

; Put backup files not in my directories

(setq backup-directory-alist `(("." . ,(expand-file-name "~/.emacs-backup"))))

;

(global-set-key "\C-x\C-e" 'compile)

(global-set-key "\C-x\C-g" 'goto-line)

;; setup function keys

(global-set-key [f1] 'compile)

(global-set-key [f2] 'next-error)

(global-set-key [f3] 'manual-entry)

;

(display-time)

(setq compile-command "make ")

(fset 'yes-or-no-p 'y-or-n-p) ; stop forcing me to spell out "yes"

and

- pdb-mode - Python debugger mode for emacs.

- http://ess.r-project.org/ - Emacs Speaks Statistics (R)

- http://www.emacswiki.org/emacs/EmacsNiftyTricks

11.09.2008 16:54

Canadian AUV's for UNCLOS

Kind of a surprising use of an AUV.

Launch and recovery in the ice could prove challenging.

Unmanned robot subs key to Canada's claim on Arctic riches

[canada.com]

The Canadian government has commissioned a pair of miniature submarines - torpedo-shaped, robotic submersibles - to probe two contentious underwater mountain chains in the Arctic Ocean, part of the country's quest to secure sovereignty and potential oil riches in a Europe-sized swath of the polar seabed. The twin Autonomous Underwater Vehicles are being built by Vancouver-based International Submarine Engineering Ltd. in a $4-million deal with Natural Resources Canada, Defence Research and Development Canada and other federal agencies. ... But the bright yellow, six-metre-long, 1,800-kilogram submersibles - being designed to cruise a long, pre-programmed course above the Arctic's underwater mountains - would allow Canadian scientists to gain more detailed information about the geology of the polar seabed. Jacob Verhoef, the chief federal scientist responsible for Canada's Arctic mapping mission, said Friday that the AUVs being built will make it much easier to conduct seabed surveying in the sometimes harsh polar conditions that can buffet ships, ground helicopters and create long delays in data collection. ...

11.07.2008 09:37

UDel CSHEL visit

Yesterday I visited the UDel Coastal Sediments, Hydrodynamic, and Engineering Lab (CSHEL). It was great to see what Art Trembanis and his students have been up to. I had a blast giving a talk for the Geology of Coasts class and a seminar for the department of Geological Sciences.

11.06.2008 08:06

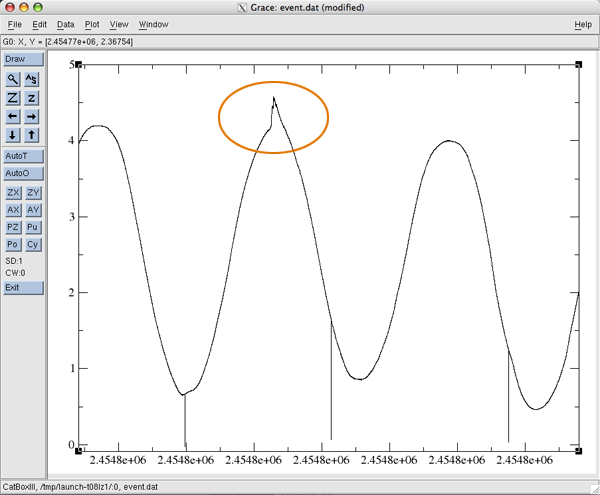

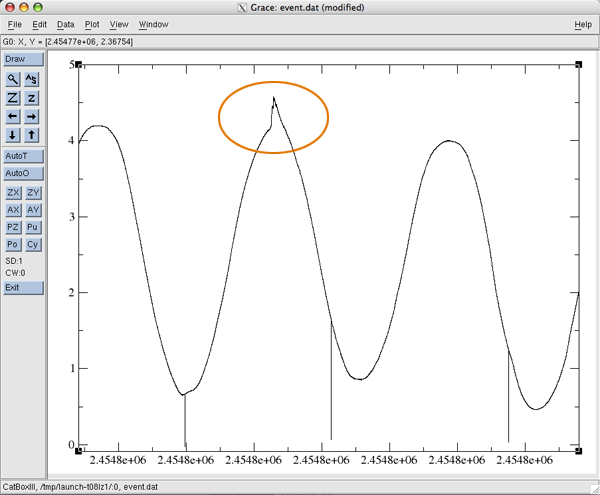

grace for data exploration

I've been trying to find a good data

exploration tool for 2D plots. There are lots of options and I just

tried another one that Jeff Gee introduced me to back in 2002 or

so: Grace.

I've got data that is time based. It's from a waterlevel pressure sensor that logs the water level along with the time in UNIX UTC seconds. In python I convert the timestamps to ISO 8601 dates for Grace:


a_datetime = datetime.datetime.utcfromtimestamp(timestamp)

a_datetime.strftime('%Y-%m-%dT%H:%M:%S') # ISO 8601
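On current Python 3 the same conversion can be written with a timezone-aware call, since `datetime.utcfromtimestamp` is deprecated as of Python 3.12. A small sketch, with a made-up function name and using the first timestamp from the tide log as sample input:

```python
from datetime import datetime, timezone


def iso8601_utc(timestamp):
    """Format UNIX seconds as an ISO 8601 UTC string without a zone suffix."""
    moment = datetime.fromtimestamp(timestamp, tz=timezone.utc)
    return moment.strftime('%Y-%m-%dT%H:%M:%S')


print(iso8601_utc(1221162708.95))  # prints 2008-09-11T19:51:48
```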

Running grace in the GUI mode like this brings up the data:

    % fink install grace
    % xmgrace event.dat

I then see this. I am not sure how to change the x-axis into something friendlier. I added the circle in photoshop to highlight the data that I am trying to explore.

11.06.2008 06:26

Arriving in Delaware

I've never been to Delaware before.

Last night I got to hang out with Art and two of his

students.

11.05.2008 10:39

MIT Odyssey IV AUV visits the UNH Chase tank

Today CCOM has a visitor being tested in our big tank:

11.04.2008 17:40

python2.6 and fink - ipython

We have started working on getting

python 2.6 setup in fink. Things are going pretty well, but there

are some funky things. For ipython, fink is back at 0.8.2 (the

latest is 0.9.1). Looks like we need to get some things updated.

    % ipython
    /sw/lib/python2.6/site-packages/IPython/Extensions/path.py:32: DeprecationWarning: the md5 module is deprecated; use hashlib instead
      import sys, warnings, os, fnmatch, glob, shutil, codecs, md5
    /sw/lib/python2.6/site-packages/IPython/iplib.py:58: DeprecationWarning: the sets module is deprecated
      from sets import Set
    Python 2.6 (r26:66714, Nov 2 2008, 16:14:23)
    Type "copyright", "credits" or "license" for more information.
    IPython 0.8.2 -- An enhanced Interactive Python.
    In [1]:

11.04.2008 06:11

Time lapse video of sampling a gravity core

I'm planning to show this video in class today for "Non-Biogenous Sediments," so I figured it was time to put it on YouTube. I am collecting paleomag cubes for sediment fabric analysis using Anisotropy of Magnetic Susceptibility (AMS). This is from an Apple iSight camera on a firewire cable using a bash script to trigger iSightCapture about once a second. Overall it takes a couple hours to process one section of a core. The video here represents something like 1-2 hours. Someone asked me what the procedure is for processing a core. I responded with a series of these time lapse videos that are hidden in the subfolders here:

Gaviota/bpsio-Aug04/core-sampling/

Sampling an ocean core - time lapse of lab procedure [youtube]

I originally posted about this here: Core 1 sample photos and movie, but those links are broken. The original post had no images. The grabframes script to capture images currently lives here: scripts [vislab-ccom/~schwehr]


11.04.2008 04:47

More python gems/tricks/tips

I was reading Eric Florenzano's trigger article and decided to take a look at his non-django posts:

Gems of Python [Eric Florenzano]


- filter

- itertools.chain

- setdefault

- collections.defaultdict

- zip

- title

- urlparse.urlparse

- inspect.getargspec(somefunction)

- Generators

- re.DEBUG

- enumerate ... I do i+=1 all the time. Time to break the habit

Here is enumerate:

    #!/usr/bin/env python
    l = ['zero','one','two','three']
    for count,number_str in enumerate(l):
        print count,'...',number_str

When run:

    % ./enum.py
    0 ... zero
    1 ... one
    2 ... two
    3 ... three

Starting from an arbitrary number (e.g. 1) is a bit less readable:

    #!/usr/bin/env python
    from itertools import izip, count

    a_list = ['one','two','three','four']
    start=1
    for i,number_str in izip(count(start), a_list):
        print i,'...',number_str

The result:

    % ./enum2.py
    1 ... one
    2 ... two
    3 ... three
    4 ... four

More stuff I need to add to my giant template file.
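For the arbitrary-start case, enumerate itself accepts a start argument as of Python 2.6, which avoids the izip and count combination. A short sketch in Python 3 syntax using the same list:

```python
a_list = ['one', 'two', 'three', 'four']

# start=1 makes the counter begin at 1 instead of 0.
for i, number_str in enumerate(a_list, start=1):
    print(i, '...', number_str)
```

This prints the same four lines as enum2.py.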

11.03.2008 17:22

AIS Class B alert from the USCG

The AIS Notices page at the USCG Navigation Center has announced an alert for AIS Class B:


New Automatic Identification System (AIS) devices may not be visible on older AIS units [pdf]

Make sure to check out the list of devices that need updating: Safety_Alert_10-08_Class_A_Listing.pdf

NEW AUTOMATIC IDENTIFICATION SYSTEM (AIS) DEVICES MAY NOT BE DISCERNIBLE WITH OLDER AIS SOFTWARE

The U.S. Coast Guard is pleased to announce the availability of type-approved Automatic Identification System (AIS) Class B devices. These lower cost AIS devices are interoperable with AIS Class A devices and make use of expanded AIS messaging capabilities. Unfortunately, not all existing Class A devices are able to take full advantage of these new messaging capabilities. All existing AIS stations will be able to receive and process these new messages from a Class B device. However, they may not be able to display all Class B information on their Minimum Keyboard & Display (MKD) or other onboard navigation systems. In most cases, a software update or patch will be required to do so. Therefore, the U.S. Coast Guard cautions new AIS Class B users to not assume that they are being 'seen' by all other AIS users or that all their information is available to all AIS users. Further, the U.S. Coast Guard strongly recommends that all users of out-dated AIS software update their systems as soon as practicable.

The new Class B devices have the same ability to acquire and display targets not visible to radar (around the bend, in sea clutter, or during foul weather). They differ slightly in their features and nature of design, which reduces their cost and affects their performance. They report at a fixed rate (30 seconds) vice the Class A's variable rate (between 2-10 seconds dependent on speed and course change). They consume less power, thus broadcast at lower strength (2 watts versus 12 watts), which impacts their broadcast range; but, they broadcast and receive virtually the same vessel identification and other information as Class A devices, however, do so via different AIS messages. Class A devices by design will receive the newer Class B AIS messages and their MKDs should display a Class B vessel's dynamic data (i.e. MMSI, position, course and speed), unfortunately, there are a few older models that do not. Although these older devices might not display the new AIS messages, they are designed, and tested, to receive and process these messages and make them available to external devices (e.g. electronic chart systems, chart plotters, radar) via a Class A output port. These external devices may also require updating in order to discern Class B equipped vessels.

AIS automatically broadcasts dynamic, static, and voyage-related vessel information that is received by other AIS-equipped stations. In ship-to-ship mode, AIS provides essential information that is not otherwise readily available to other vessels, such as name, position, course, and speed. In the ship-to-shore mode, AIS allows for the efficient exchange of information that previously was only available via voice communications with Vessel Traffic Services. In either mode, AIS enhances a user's situational awareness, makes possible the accurate exchange of navigational information, mitigates the risk of collision through reliable passing arrangements, facilitates vessel traffic management while simultaneously reducing voice radiotelephone transmissions, and enhances maritime domain awareness. The U.S. Coast Guard encourages its widest use. ...
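To get a feel for what the fixed 30-second Class B rate means in practice, here is a minimal Python sketch that computes how far a vessel moves between consecutive position reports. The reporting intervals come from the notice above. The function name, the example speed of 20 knots, and the constant-speed, straight-line assumption are mine and are only for illustration.

```python
METERS_PER_NAUTICAL_MILE = 1852.0  # exact, by definition of the nautical mile
SECONDS_PER_HOUR = 3600.0


def distance_between_reports_m(speed_knots, interval_s):
    """Distance in meters covered between two reports.

    Assumes constant speed on a straight track (an illustrative simplification).
    """
    meters_per_second = speed_knots * METERS_PER_NAUTICAL_MILE / SECONDS_PER_HOUR
    return meters_per_second * interval_s


if __name__ == "__main__":
    speed = 20.0  # knots; hypothetical example vessel
    cases = [
        ("Class B, fixed 30 s", 30.0),
        ("Class A, slowest 10 s", 10.0),
        ("Class A, fastest 2 s", 2.0),
    ]
    for label, interval in cases:
        meters = distance_between_reports_m(speed, interval)
        print("%s: %.0f m between reports" % (label, meters))
```

Under these assumptions, a Class B vessel at 20 knots moves a little over 300 m between reports, while a Class A vessel at the same speed moves roughly 20 to 100 m, depending on where it sits in its variable rate.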

There is also a notice on the same page about the testing going on in Tampa, FL of the met-hydro test message. This is a follow-on to the demonstration last

Commencing 11 September 2008, the Tampa Bay Cooperative Vessel Traffic Service began broadcasting Automatic Identification System (AIS) test messages to select test participants in the area via standard AIS channels. These broadcasts, originating from MMSI 003660471, are less than 1/2 second in duration, and, should not impact other AIS users in the area. However, should they, please notify us via our AIS Problem Report or by calling our Navigation Information Service (NIS) watchstander at (703) 313-5900.

This is the first phase of a Coast Guard Research & Development Center project to develop, design and evaluate the most efficient means by which mariners can receive critical real-time navigation safety information through the use of AIS and its binary messaging capability. The first phase of the project will directly access Physical Oceanographic Real Time System (PORTS) quality checked data from National Oceanographic and Atmospheric Agency (NOAA) servers, and, repackage it for AIS transmission. These broadcasts will commence at 1100 on 11 September 2008 and every 6th minute thereafter, i.e. 11:06:00 a.m., 11:12:00 a.m., etc. ...
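The "every 6th minute" schedule in the notice is easy to reproduce if you want to know when to expect the test messages in a log. Below is a small Python 3 sketch. The start time and the 6-minute period come from the notice. The function names are hypothetical, and I am assuming the times are local Tampa time with no time zone handling.

```python
import math
from datetime import datetime, timedelta

# From the notice: first broadcast at 1100 on 11 September 2008.
# Assumption: naive local time, no time zone conversion.
FIRST_BROADCAST = datetime(2008, 9, 11, 11, 0, 0)
BROADCAST_PERIOD = timedelta(minutes=6)


def broadcast_times(count):
    """Return the first `count` scheduled broadcast times."""
    return [FIRST_BROADCAST + i * BROADCAST_PERIOD for i in range(count)]


def next_broadcast(now):
    """Return the first scheduled broadcast at or after `now`."""
    if now <= FIRST_BROADCAST:
        return FIRST_BROADCAST
    elapsed_s = (now - FIRST_BROADCAST).total_seconds()
    periods = int(math.ceil(elapsed_s / BROADCAST_PERIOD.total_seconds()))
    return FIRST_BROADCAST + periods * BROADCAST_PERIOD


if __name__ == "__main__":
    for t in broadcast_times(3):
        print(t.strftime("%H:%M:%S"))
    print(next_broadcast(datetime(2008, 9, 11, 11, 7, 30)))
```

The same `next_broadcast` check could be used to flag received messages that do not line up with a scheduled slot, though the notice does not say how tightly the transmissions hold to the schedule.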

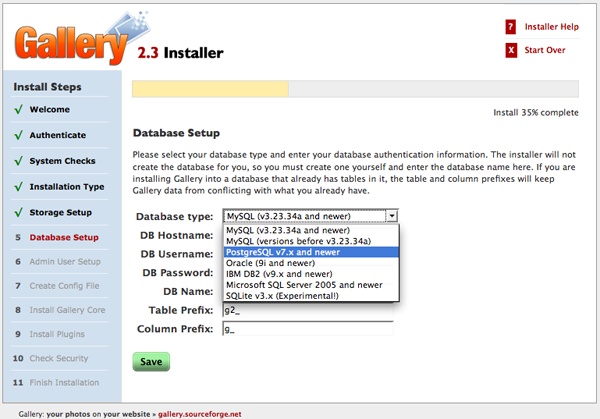

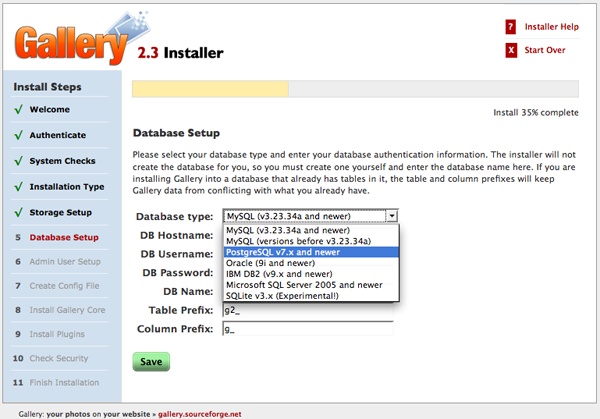

11.03.2008 16:20

Gallery2 and django

I'd like to see something like Gallery2 in Django. I've been using a moderately sized PHP application at NASA that is built around Gallery2 and have been impressed. I figured the best thing to do is evaluate Gallery2 on my laptop and see if I could get Django to pick up the database model via introspection. First, to install Gallery2: I got the "full" English-only version. This is on a Mac with fink libapache2-mod-php5.

As an aside, you can also just directly look at the database tables. For Postgresql:

    % createdb gallery2 -E UNICODE    # Set up the PostgreSQL database
    % createuser -l -P gallery2       # Make a database user for Gallery2
    % wget http://downloads.sourceforge.net/gallery/gallery-2.3-full-en.tar.gz
    % cd /sw/var/www
    % tar xf ~/Desktop/gallery-2.3-full-en.tar.gz
    % open http://localhost/gallery2/install

Then I started walking through the install. The only thing a bit custom is that I put the data directory in /sw/var/g2data so that it is not accessible for viewing as web pages. I selected Postgres:

I stopped when I got to Step 9, which wants to install all the modules. At this point, the "Core" module is installed and I want to check out the database.

    % mkdir ~/Desktop/djangotest
    % cd ~/Desktop/djangotest
    % django-admin.py startproject g2
    % cd g2
    % python2.5 manage.py inspectdb > model_tmp.py

The last command will dump the database model that Django thinks goes with the database tables created by Gallery2:

    from django.db import models

    class G2Schema(models.Model):
        g_name = models.CharField(max_length=128, primary_key=True)
        g_major = models.IntegerField()
        g_minor = models.IntegerField()
        g_createsql = models.TextField()
        g_pluginid = models.CharField(max_length=32)
        g_type = models.CharField(max_length=32)
        g_info = models.TextField()
        class Meta:
            db_table = u'g2_schema'

    class G2Eventlogmap(models.Model):
        g_id = models.IntegerField(primary_key=True)
        g_userid = models.IntegerField()
        g_type = models.CharField(max_length=32)
        g_summary = models.CharField(max_length=255)
        g_details = models.TextField()
        g_location = models.CharField(max_length=255)
        g_client = models.CharField(max_length=128)
        g_timestamp = models.IntegerField()
        g_referer = models.CharField(max_length=128)
        class Meta:
            db_table = u'g2_eventlogmap'

    class G2Externalidmap(models.Model):
        g_externalid = models.CharField(max_length=128)
        g_entitytype = models.CharField(max_length=32)
        g_entityid = models.IntegerField()
        class Meta:
            db_table = u'g2_externalidmap'

    class G2Failedloginsmap(models.Model):
        g_username = models.CharField(max_length=32, primary_key=True)
        g_count = models.IntegerField()
        g_lastattempt = models.IntegerField()
        class Meta:
            db_table = u'g2_failedloginsmap'

    class G2Accessmap(models.Model):
        g_accesslistid = models.IntegerField()
        g_userorgroupid = models.IntegerField()
        g_permission = models.TextField() # This field type is a guess.
        class Meta:
            db_table = u'g2_accessmap'

    class G2Accesssubscribermap(models.Model):
        g_itemid = models.IntegerField(primary_key=True)
        g_accesslistid = models.IntegerField()
        class Meta:
            db_table = u'g2_accesssubscribermap'

    class G2Albumitem(models.Model):
        g_id = models.IntegerField(primary_key=True)
        g_theme = models.CharField(max_length=32)
        g_orderby = models.CharField(max_length=128)
        g_orderdirection = models.CharField(max_length=32)
        class Meta:
            db_table = u'g2_albumitem'

    class G2Animationitem(models.Model):
        g_id = models.IntegerField(primary_key=True)
        g_width = models.IntegerField()
        g_height = models.IntegerField()
        class Meta:
            db_table = u'g2_animationitem'

    class G2Cachemap(models.Model):
        g_key = models.CharField(max_length=32)
        g_value = models.TextField()
        g_userid = models.IntegerField()
        g_itemid = models.IntegerField()
        g_type = models.CharField(max_length=32)
        g_timestamp = models.IntegerField()
        g_isempty = models.SmallIntegerField()
        class Meta:
            db_table = u'g2_cachemap'

    class G2Childentity(models.Model):
        g_id = models.IntegerField(primary_key=True)
        g_parentid = models.IntegerField()
        class Meta:
            db_table = u'g2_childentity'

    class G2Dataitem(models.Model):
        g_id = models.IntegerField(primary_key=True)
        g_mimetype = models.CharField(max_length=128)
        g_size = models.IntegerField()
        class Meta:
            db_table = u'g2_dataitem'

    class G2Derivative(models.Model):
        g_id = models.IntegerField(primary_key=True)
        g_derivativesourceid = models.IntegerField()
        g_derivativeoperations = models.CharField(max_length=255)
        g_derivativeorder = models.IntegerField()
        g_derivativesize = models.IntegerField()
        g_derivativetype = models.IntegerField()
        g_mimetype = models.CharField(max_length=128)
        g_postfilteroperations = models.CharField(max_length=255)
        g_isbroken = models.SmallIntegerField()
        class Meta:
            db_table = u'g2_derivative'

    class G2Derivativeimage(models.Model):
        g_id = models.IntegerField(primary_key=True)
        g_width = models.IntegerField()
        g_height = models.IntegerField()
        class Meta:
            db_table = u'g2_derivativeimage'

    class G2Derivativeprefsmap(models.Model):
        g_itemid = models.IntegerField()
        g_order = models.IntegerField()
        g_derivativetype = models.IntegerField()
        g_derivativeoperations = models.CharField(max_length=255)
        class Meta:
            db_table = u'g2_derivativeprefsmap'

    class G2Descendentcountsmap(models.Model):
        g_userid = models.IntegerField()
        g_itemid = models.IntegerField()
        g_descendentcount = models.IntegerField()
        class Meta:
            db_table = u'g2_descendentcountsmap'

    class G2Entity(models.Model):
        g_id = models.IntegerField(primary_key=True)
        g_creationtimestamp = models.IntegerField()
        g_islinkable = models.SmallIntegerField()
        g_linkid = models.IntegerField()
        g_modificationtimestamp = models.IntegerField()
        g_serialnumber = models.IntegerField()
        g_entitytype = models.CharField(max_length=32)
        g_onloadhandlers = models.CharField(max_length=128)
        class Meta:
            db_table = u'g2_entity'

    class G2Factorymap(models.Model):
        g_classtype = models.CharField(max_length=128)
        g_classname = models.CharField(max_length=128)
        g_implid = models.CharField(max_length=128)
        g_implpath = models.CharField(max_length=128)
        g_implmoduleid = models.CharField(max_length=128)
        g_hints = models.CharField(max_length=255)
        g_orderweight = models.CharField(max_length=255)
        class Meta:
            db_table = u'g2_factorymap'

    class G2Filesystementity(models.Model):
        g_id = models.IntegerField(primary_key=True)
        g_pathcomponent = models.CharField(max_length=128)
        class Meta:
            db_table = u'g2_filesystementity'

    class G2Group(models.Model):
        g_id = models.IntegerField(primary_key=True)
        g_grouptype = models.IntegerField()
        g_groupname = models.CharField(unique=True, max_length=128)
        class Meta:
            db_table = u'g2_group'

    class G2Item(models.Model):
        g_id = models.IntegerField(primary_key=True)
        g_cancontainchildren = models.SmallIntegerField()
        g_description = models.TextField()
        g_keywords = models.CharField(max_length=255)
        g_ownerid = models.IntegerField()
        g_renderer = models.CharField(max_length=128)
        g_summary = models.CharField(max_length=255)
        g_title = models.CharField(max_length=128)
        g_viewedsincetimestamp = models.IntegerField()
        g_originationtimestamp = models.IntegerField()
        class Meta:
            db_table = u'g2_item'

class G2Itemattributesmap(models.Model):

g_itemid = models.IntegerField(primary_key=True)

g_viewcount = models.IntegerField()

g_orderweight = models.IntegerField()

g_parentsequence = models.CharField(max_length=255)